Conference Call Transcription: A Complete How-To Guide 2026

You finish a long conference call, jump into the next task, and then someone Slacks you an hour later asking, “What did finance agree to?” The recording exists somewhere. The answer exists somewhere in that recording. But now someone has to scrub through a noisy hour of audio, decode side conversations, and guess which comment turned into the final decision.

That's why conference call transcription matters. Not as a convenience feature, but as operational infrastructure. A transcript turns a meeting from a fading memory into something searchable, reviewable, and reusable. When the process is set up well, the transcript becomes more than notes. It becomes a record of decisions, a source for follow-ups, and a shared reference point for people who weren't in the room.

Transcription failures rarely stem from poor software. More often, the call was hard to transcribe in the first place, the settings were wrong, speaker labels went unreviewed, or the output was never converted into action. Fix those factors and conference call transcription stops being a disorganized administrative burden.

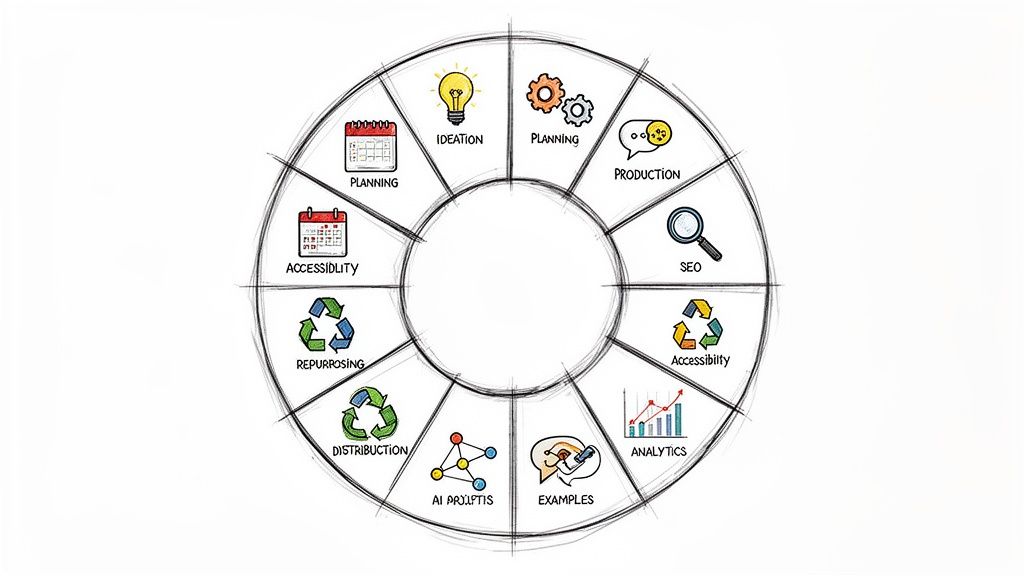

Why Master Conference Call Transcription Now

A lot of teams still treat transcripts like an optional add-on. That made sense when meeting notes lived in one person's notebook and everyone else relied on memory. It doesn't make sense now.

Remote and hybrid work changed the cost of losing information. People are in more virtual conversations, and details slip faster when discussion happens across calls, chats, and follow-up documents. The market reflects that shift. The AI meeting transcription market is projected to grow from $3.86 billion in 2025 to $29.45 billion by 2034, at a 25.62% CAGR, driven in part by remote workers attending 4 to 5 meetings per week and struggling to retain key details from them, according to meeting transcription adoption statistics from Sonix.

That growth matters because it signals a change in expectations. Teams aren't just asking whether they can produce a transcript. They're asking whether they can trust it, search it, label speakers correctly, and use it fast enough to support real work.

If you need a quick primer on the broader category, this overview of what audio transcription is is useful. But conference call transcription has its own demands. Multiple speakers. Overlap. Acronyms. Weak mics. Late joiners. Background noise from someone taking the call in a kitchen or airport lounge.

Practical rule: If your team makes decisions on calls, you need a transcription workflow. Not just a transcription tool.

The payoff isn't abstract. A solid transcript reduces the time people spend replaying audio, chasing clarifications, and reconstructing decisions after the fact. It also helps managers coach, onboard, and verify what happened in a client, vendor, or internal meeting.

Mastering this now isn't about being early. It's about not being late. Teams that know how to capture calls cleanly, transcribe them accurately, and turn them into usable records move faster because they stop redoing the same thinking twice.

Set Up Your Call for Transcription Success

Most transcription problems start before anyone clicks “record.” If the audio is messy, the transcript will be messy. You can fix some errors later, but not all of them.

Clean audio beats clever editing

The fastest way to improve conference call transcription is to improve the call itself. That usually means making a few boring choices on purpose.

Here's the good-versus-bad version of the basics:

- Good mic choice: Use a dedicated headset or USB microphone.

- Bad mic choice: Rely on a laptop mic across the room.

- Good environment: Close windows, silence notifications, and avoid speakerphone in open spaces.

- Bad environment: Keyboard clatter, hallway noise, and echo from hard surfaces.

- Good meeting habit: One person speaks at a time, especially during decisions or action-item review.

- Bad meeting habit: People jump in over each other because “we all know what we mean.”

- Good naming: Ask everyone to introduce themselves clearly at the beginning if the group includes new attendees.

- Bad naming: Let the transcript guess who “yeah, I agree” belongs to.

The reason is simple. Speech models can handle a lot, but they can't recover words that never came through clearly in the recording.

Tune the platform before the meeting starts

Zoom, Teams, Meet, and similar tools all give you audio settings that affect transcript quality. Users often overlook them. They shouldn't.

Check these before important calls:

- Select the right microphone input. Meeting apps often default to the wrong device after you plug in headphones or dock a laptop.

- Test gain and distance. If you're peaking, words distort. If you're too far away, consonants disappear.

- Turn off unnecessary sound effects. Virtual audio enhancements can introduce artifacts.

- Record a short sample. A one-minute test catches issues that a green mic icon won't.
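The gain check and the one-minute sample can be combined into a quick script. What follows is a minimal sketch, assuming the test recording is saved as a 16-bit PCM WAV file; the function names and the thresholds (`quiet_rms_dbfs`, `max_clipped`) are illustrative assumptions, not guidance from any meeting platform.

```python
import array
import math
import sys
import wave


def check_levels(samples, full_scale=32767, clip_ratio=0.999):
    """Return peak and RMS level (in dBFS) plus the fraction of clipped samples."""
    if not samples:
        raise ValueError("no samples to analyse")
    peak = max(abs(s) for s in samples)
    rms = math.sqrt(sum(s * s for s in samples) / len(samples))
    clipped = sum(1 for s in samples if abs(s) >= full_scale * clip_ratio)

    def to_dbfs(value):
        return 20 * math.log10(value / full_scale) if value > 0 else float("-inf")

    return {
        "peak_dbfs": to_dbfs(peak),
        "rms_dbfs": to_dbfs(rms),
        "clipped_fraction": clipped / len(samples),
    }


def read_pcm16(path):
    """Read a 16-bit PCM WAV file into a list of ints (channels interleaved)."""
    with wave.open(path, "rb") as wav:
        if wav.getsampwidth() != 2:
            raise ValueError("this sketch only handles 16-bit PCM")
        frames = wav.readframes(wav.getnframes())
    samples = array.array("h")
    samples.frombytes(frames)
    if sys.byteorder == "big":
        samples.byteswap()  # WAV sample data is little-endian
    return list(samples)


def diagnose(levels, quiet_rms_dbfs=-35.0, max_clipped=0.001):
    """Classify a recording; both thresholds are illustrative assumptions."""
    if levels["clipped_fraction"] > max_clipped:
        return "too hot: lower the gain or move back from the mic"
    if levels["rms_dbfs"] < quiet_rms_dbfs:
        return "too quiet: raise the gain or move closer to the mic"
    return "ok"
```

With a sample saved under a hypothetical name such as `mic-test.wav`, calling `diagnose(check_levels(read_pcm16("mic-test.wav")))` gives a rough pass or fail before the real call. It does not replace listening to the sample, since it cannot detect echo or background noise.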

For teams that record often, it helps to keep a simple SOP. This guide on how to record conversations is a good companion for standardizing that part of the workflow.

The best transcript usually comes from the least dramatic meeting setup.

A small pre-call routine saves real cleanup time later. That matters because transcription errors cluster. One bad mic doesn't just affect that speaker's words. It also confuses speaker attribution for everyone around them.

Run the meeting in a transcribable way

Conference calls become easier to transcribe when the host manages the room deliberately.

Use a few practical rules:

- Front-load introductions: Especially on cross-functional calls, get names and roles spoken clearly once.

- Call on people by name: “Sarah, can you walk us through procurement?” creates cleaner attribution than open discussion.

- Pause before topic changes: Short pauses help both listeners and transcription systems.

- Restate decisions: If someone says, “So we're agreed,” follow it with the actual decision in one sentence.

That last point matters more than people think. A transcript is only useful if the important moments are stated plainly enough to survive audio loss, overlap, and later review.

Later in the workflow, you can ask AI to summarize and extract action items. But if the source audio is muddy, even strong summarization won't rescue it.

For a quick visual walkthrough on recording cleaner source audio, this is worth a few minutes:

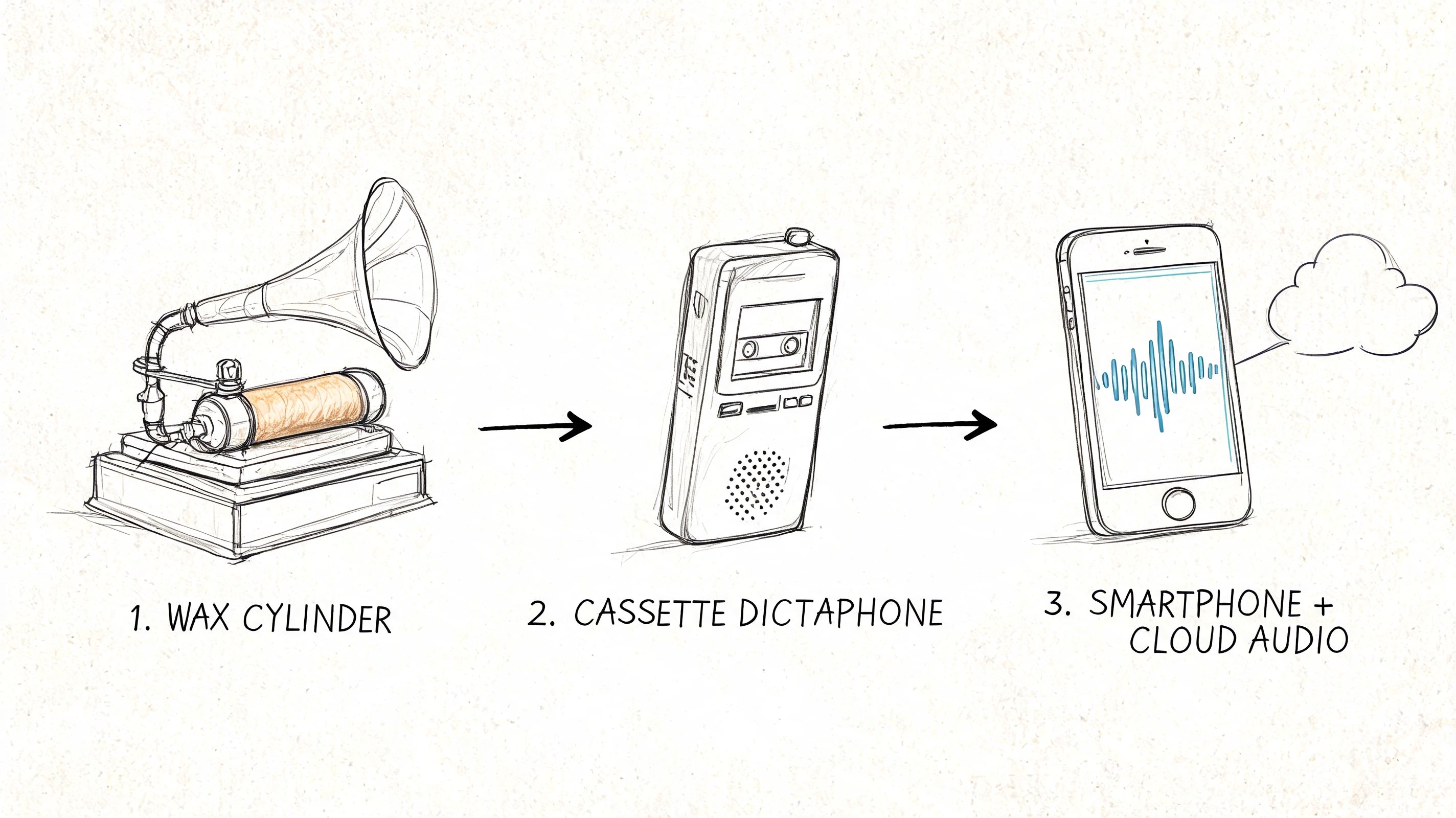

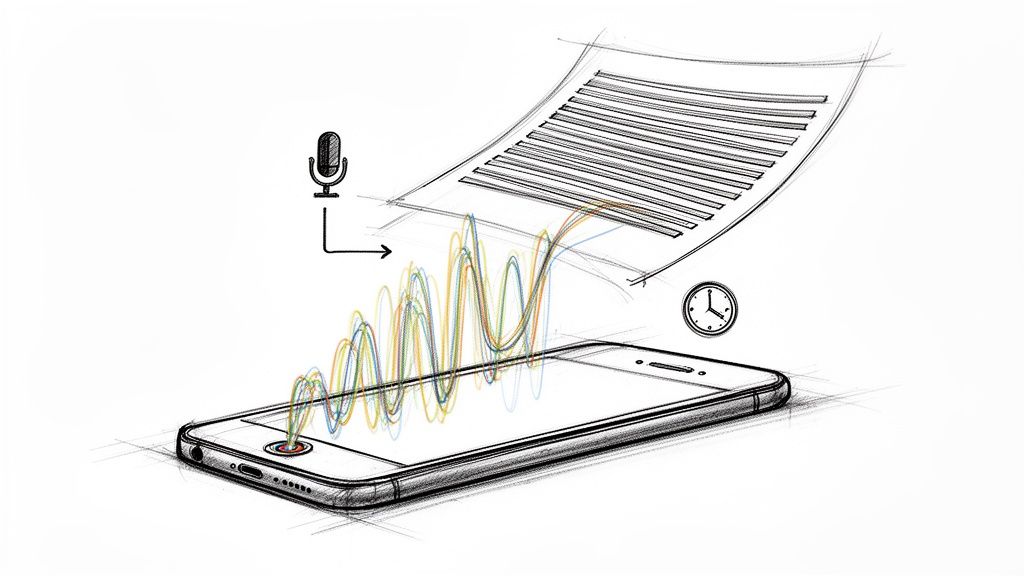

How AI Turns Your Audio into Accurate Text

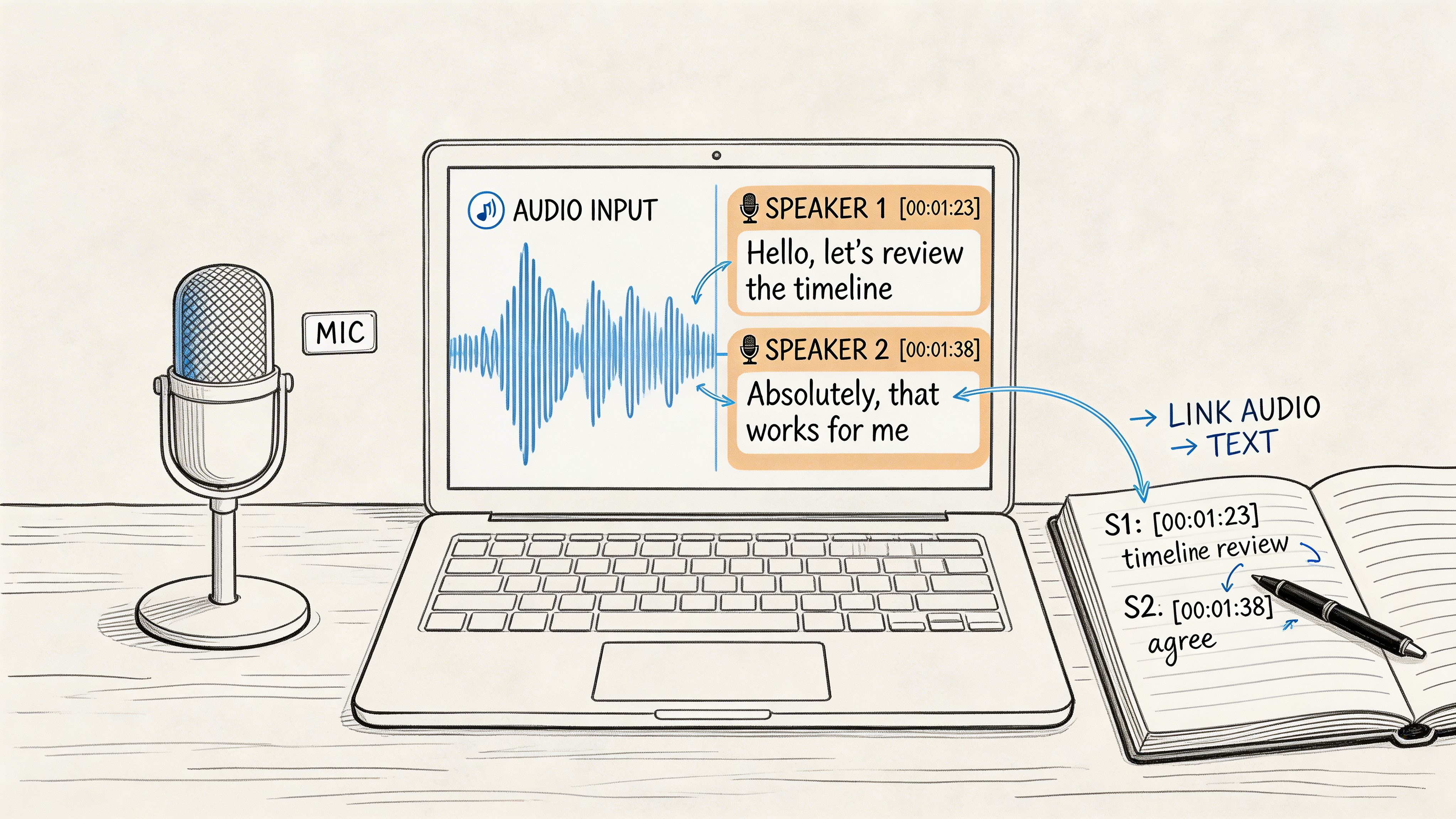

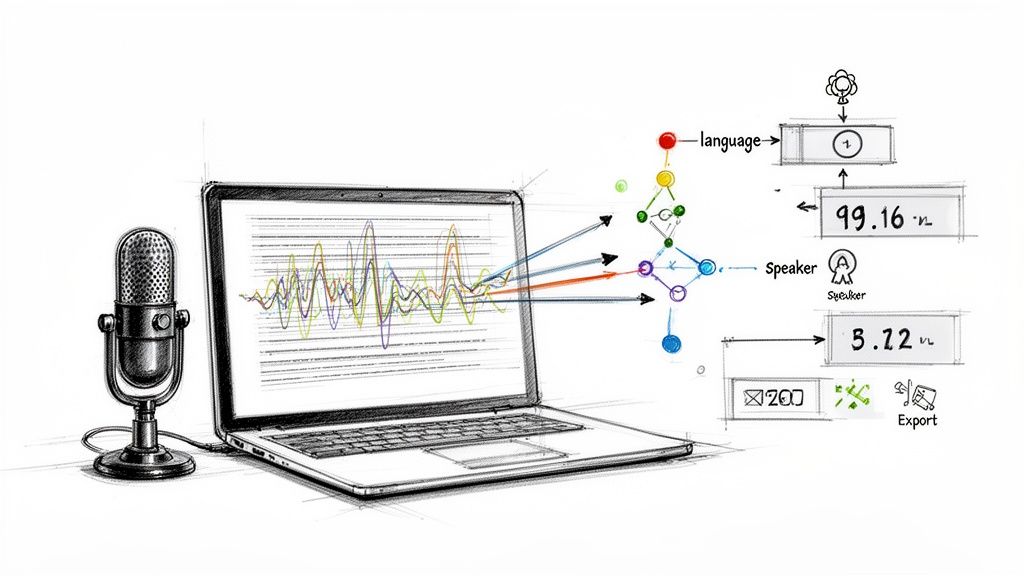

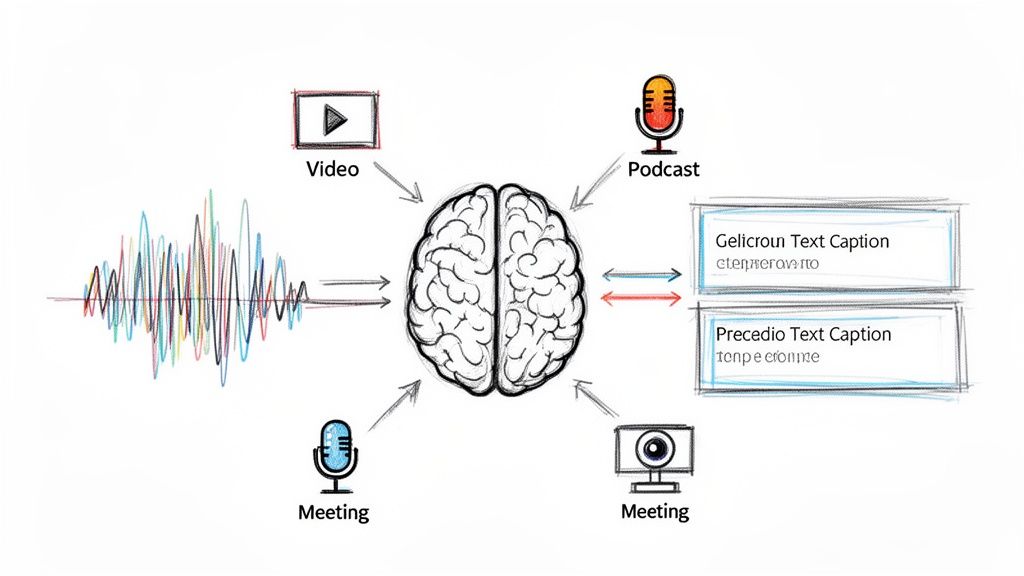

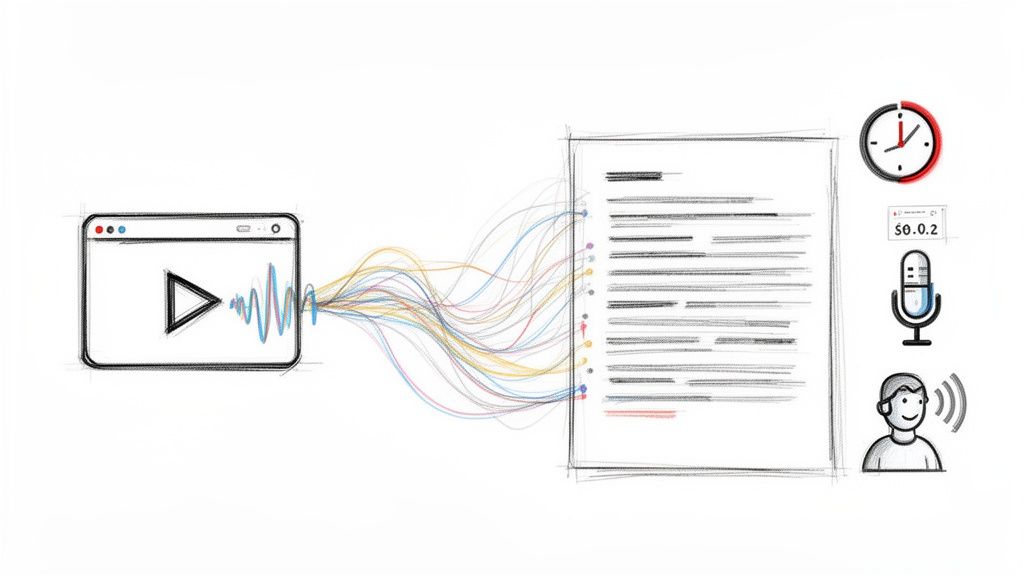

Once the recording is clean, AI can do its job well. That job is more than “listen and type.” Good conference call transcription systems break audio into parts, detect speech patterns, decide who's speaking, and align text back to time in the recording.

What happens under the hood

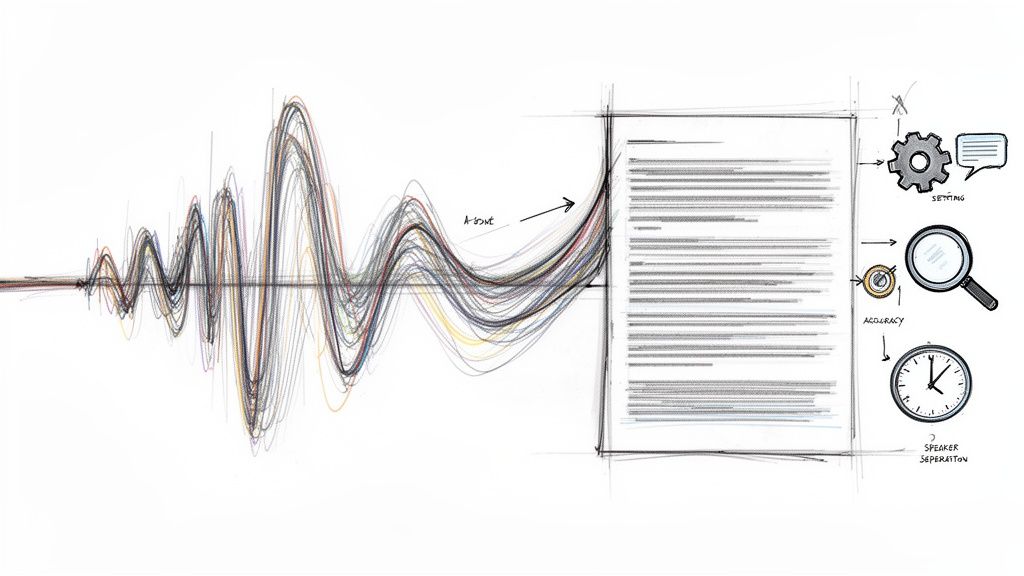

AI models like Whisper typically pre-process audio into 30-second chunks, convert that audio into log-mel spectrograms, and use a transformer-based model to generate text. For calls with multiple speakers, a diarization step assigns speaker labels, commonly by clustering voice characteristics (spectral clustering is one such method). This can reach 92% to 96% accuracy on clean audio, according to this breakdown of AI transcription mechanics.
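To make the chunking step concrete, here is a minimal sketch of how a recording might be cut into fixed 30-second windows before any spectrogram is computed. The function names are hypothetical, and the 16 kHz default is an assumption based on the sample rate the open-source Whisper code works with; other systems may differ.

```python
def chunk_bounds(n_samples, sample_rate=16_000, chunk_seconds=30):
    """Return (start, end) sample indices for consecutive fixed-length windows."""
    chunk_len = sample_rate * chunk_seconds
    return [
        (start, min(start + chunk_len, n_samples))
        for start in range(0, n_samples, chunk_len)
    ]


def pad_chunk(samples, sample_rate=16_000, chunk_seconds=30):
    """Zero-pad (or trim) a chunk so it holds exactly chunk_seconds of audio."""
    chunk_len = sample_rate * chunk_seconds
    trimmed = list(samples[:chunk_len])
    return trimmed + [0] * (chunk_len - len(trimmed))


# A 70-second call at 16 kHz yields two full windows and one partial window.
bounds = chunk_bounds(70 * 16_000)
```

The last window is shorter than 30 seconds, which is why a padding step is needed before the model sees it. This sketch stops at chunking and does not compute the spectrogram itself.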

That sounds technical, but the practical takeaway is straightforward. The machine is building a first draft from patterns in your audio. It isn't “hearing” the meeting the way a participant hears it. It's calculating probable text from acoustic signals and context.

That's why your settings matter.

The settings that actually affect results

When you upload a file or connect a recording link, focus on the options that improve the first draft:

| Setting | Why it matters | What to choose |
|---|---|---|
| Language | Wrong language selection hurts recognition early | Pick the spoken language instead of relying on guesswork when you know it |
| Speaker labels | Needed for accountability and review | Turn on diarization for any call with more than one participant |
| Timestamps | Makes verification and clipping easier | Enable them if the transcript will be reviewed or shared |
| Verbatim vs cleaned text | Changes readability and legal usefulness | Use cleaned text for team notes, more literal text for records |
| Custom terms or glossary | Helps with product names and jargon | Add recurring terms when the platform allows it |

A lot of users skip timestamps because they want a cleaner document. That's a mistake. Timestamps are what let you jump back to the exact second where a decision, promise, or disputed statement happened.
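As a rough illustration of why timestamps pay off, the sketch below models a transcript as timestamped segments and looks up where a phrase was spoken. The `Segment` shape and the helper names are assumptions for illustration; real tools export their own formats.

```python
from dataclasses import dataclass


@dataclass
class Segment:
    start: float  # seconds from the start of the recording
    end: float
    speaker: str
    text: str


def format_timestamp(seconds):
    """Render a second count as HH:MM:SS."""
    hours, rem = divmod(int(seconds), 3600)
    minutes, secs = divmod(rem, 60)
    return f"{hours:02d}:{minutes:02d}:{secs:02d}"


def find_mentions(segments, phrase):
    """Return (timestamp, speaker, text) for every segment containing phrase."""
    needle = phrase.lower()
    return [
        (format_timestamp(s.start), s.speaker, s.text)
        for s in segments
        if needle in s.text.lower()
    ]


segments = [
    Segment(61.0, 66.5, "Sarah", "Procurement is on track."),
    Segment(3725.4, 3731.0, "Dev", "Finance agreed to the revised budget."),
]
hits = find_mentions(segments, "budget")
```

Here `hits` points to 01:02:05 in the recording, so a reviewer can jump straight to that moment instead of scrubbing through the hour.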

Field note: Treat the AI transcript as a strong first pass, not a certified record the moment it appears.

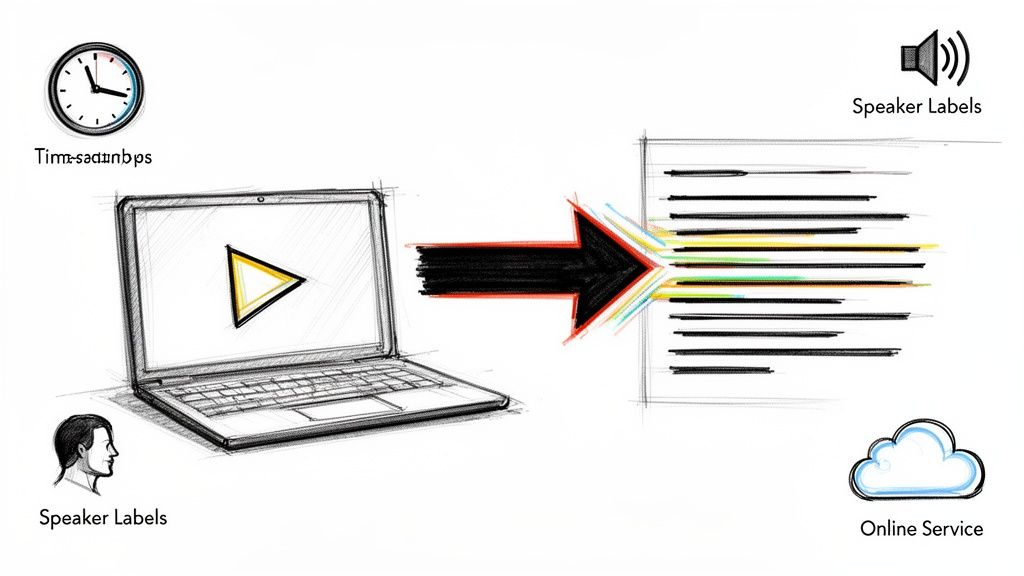

Speaker diarization is where good tools separate themselves

The hardest part of conference call transcription isn't raw word recognition. It's figuring out who said what when multiple people interrupt, agree, laugh, or talk at once.

Diarization gets easier when speakers have distinct voices and take turns. It gets worse when everyone sounds compressed through meeting software, when people overlap, or when accents and domain terms combine in the same sentence. That's why I always tell teams to judge tools on speaker handling, not just headline accuracy.
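One common way to combine a transcript with diarization output is to give each text segment the speaker whose turn overlaps it most in time. The sketch below shows that idea under stated assumptions: the tuple shapes and function names are hypothetical, and it is not a description of how any specific product assigns speakers.

```python
def overlap(a_start, a_end, b_start, b_end):
    """Length in seconds of the intersection of two time intervals."""
    return max(0.0, min(a_end, b_end) - max(a_start, b_start))


def assign_speakers(segments, turns, unknown="UNKNOWN"):
    """Label each text segment with the speaker who overlaps it the most.

    segments: list of (start, end, text) from the speech recognizer
    turns:    list of (start, end, speaker) from the diarization step
    Returns a list of (speaker, text, contested), where contested is True
    when more than one speaker overlapped the segment.
    """
    labeled = []
    for seg_start, seg_end, text in segments:
        totals = {}
        for turn_start, turn_end, speaker in turns:
            shared = overlap(seg_start, seg_end, turn_start, turn_end)
            if shared > 0:
                totals[speaker] = totals.get(speaker, 0.0) + shared
        speaker = max(totals, key=totals.get) if totals else unknown
        labeled.append((speaker, text, len(totals) > 1))
    return labeled


segments = [
    (0.0, 4.0, "Let's approve the budget."),
    (4.0, 6.0, "Yeah, I agree."),
    (10.0, 11.0, "Okay."),
]
turns = [(0.0, 4.2, "S1"), (3.8, 6.0, "S2")]
labeled = assign_speakers(segments, turns)
```

The `contested` flag marks segments where turns overlapped, which are the places most worth a human check when people talk over each other.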

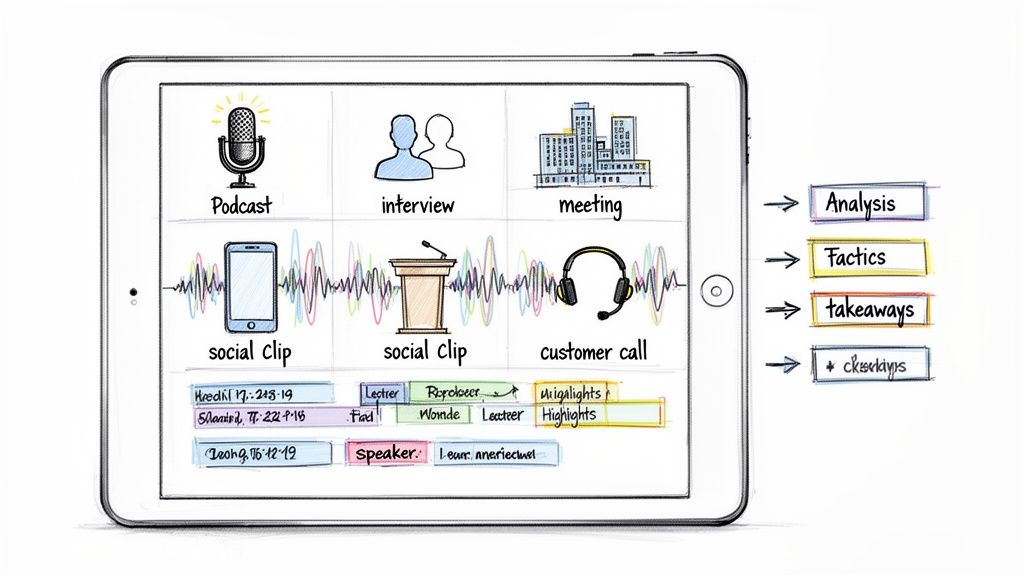

If you work across formats, the same thinking applies outside meetings too. For example, people dealing with short-form content often need a similar workflow for clipping, labeling, and reviewing spoken content. This guide on how to transcribe Instagram video is useful because it shows how upload and cleanup decisions affect usability even when the source isn't a conference platform.

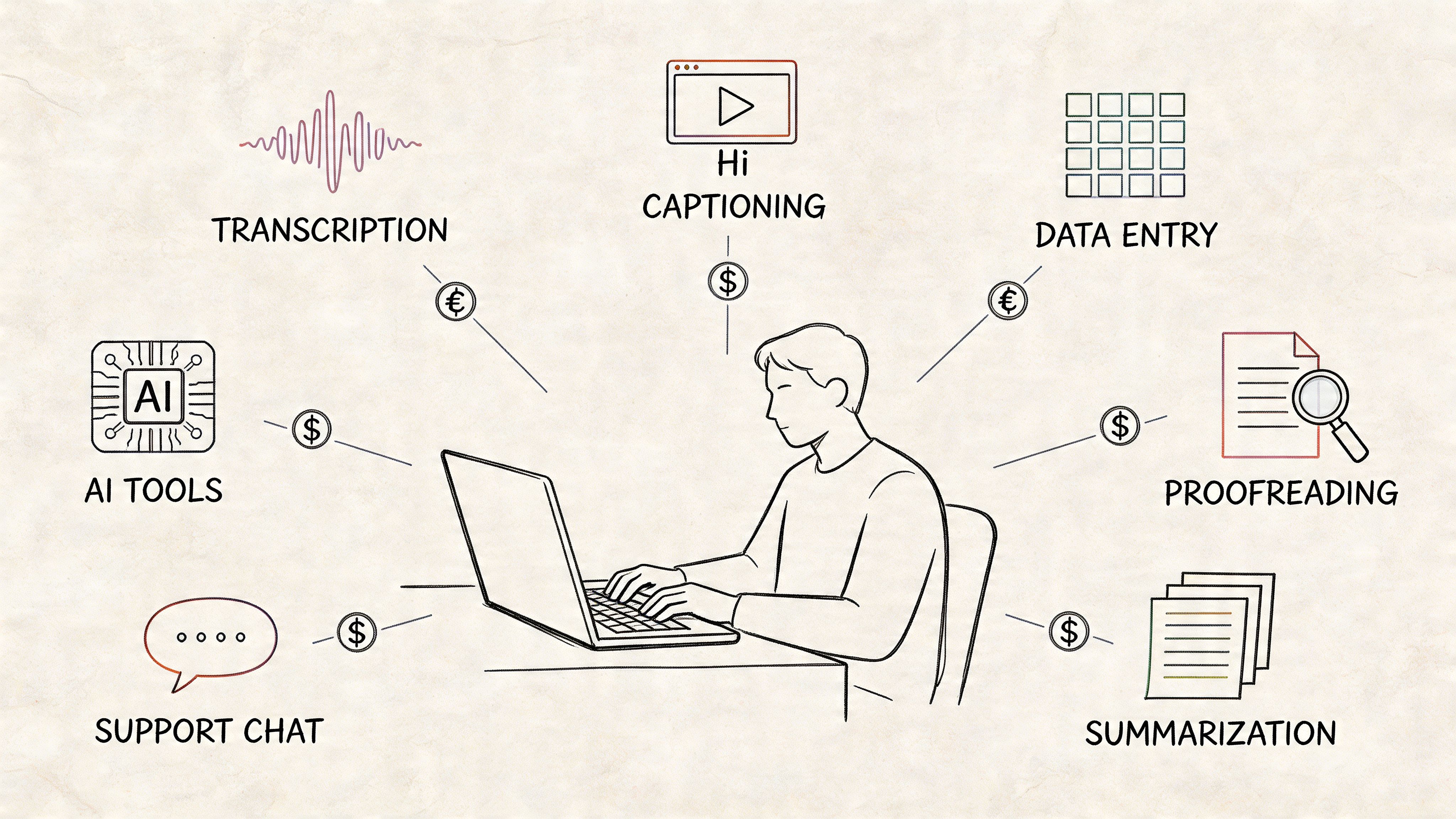

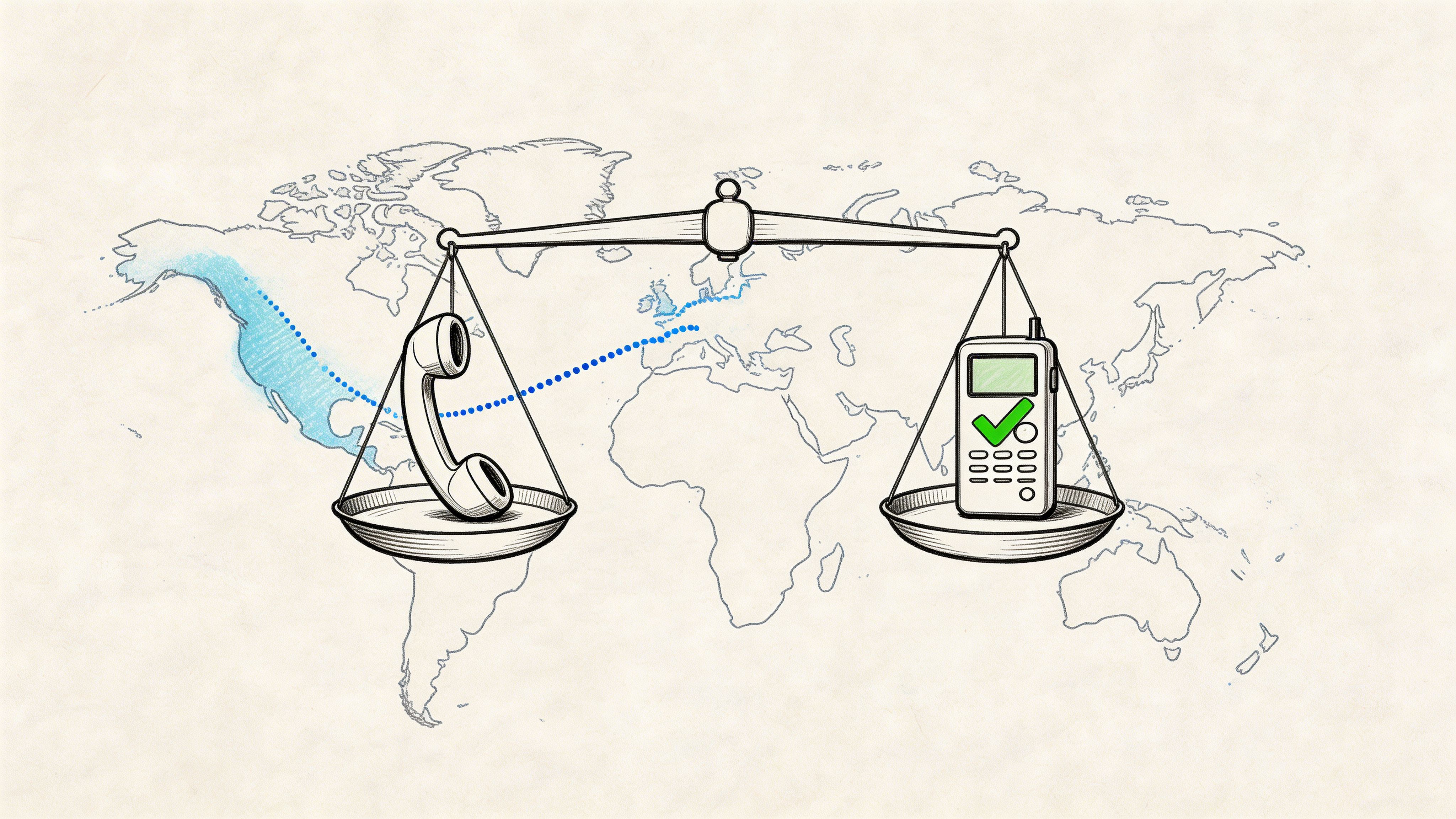

Choose a tool based on workflow, not only output

Different tools fit different teams. Gong is often used in sales environments because it ties transcripts to coaching and CRM workflows. Some teams prefer a direct audio-to-text utility that gives them control over exports and edits. Audio to text AI workflows are a good reference point when you're comparing those approaches.

One practical example is Whisper AI, which can ingest long-form audio, detect speakers, add timestamps, and generate summaries or highlights from the same source file. That kind of setup is useful when the transcript needs to feed follow-up tasks instead of just living as a text file.

The main thing to avoid is choosing a tool based on a polished demo with a single clear speaker. Conference calls are messier than that. Test with your real meetings, your real accents, and your real jargon. That tells you more than any feature grid.

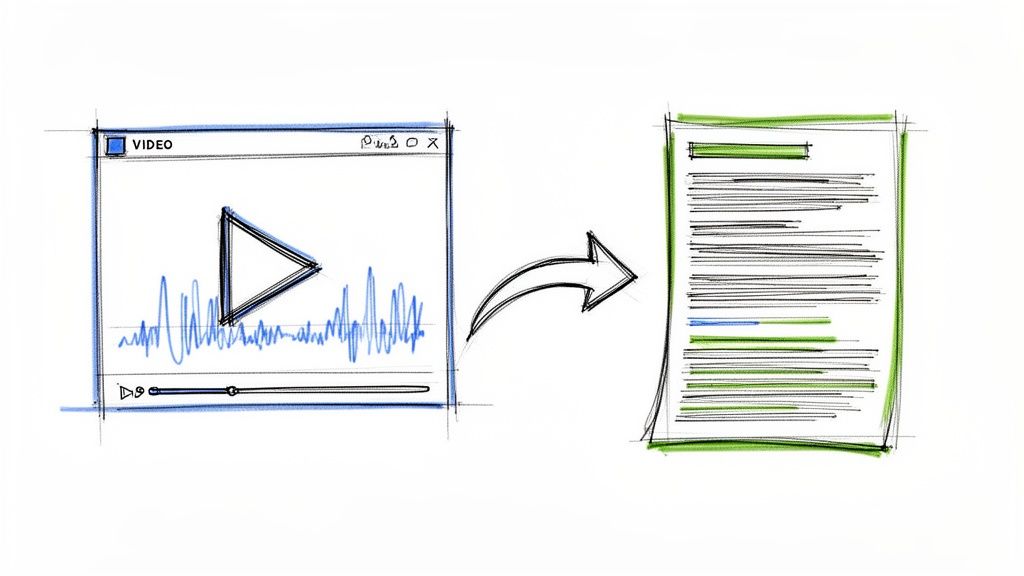

From Raw Text to a Polished, Usable Record

The first transcript is rarely the final transcript. That isn't a failure of the software. It's normal. The mistake is assuming the AI output is ready to distribute without review.

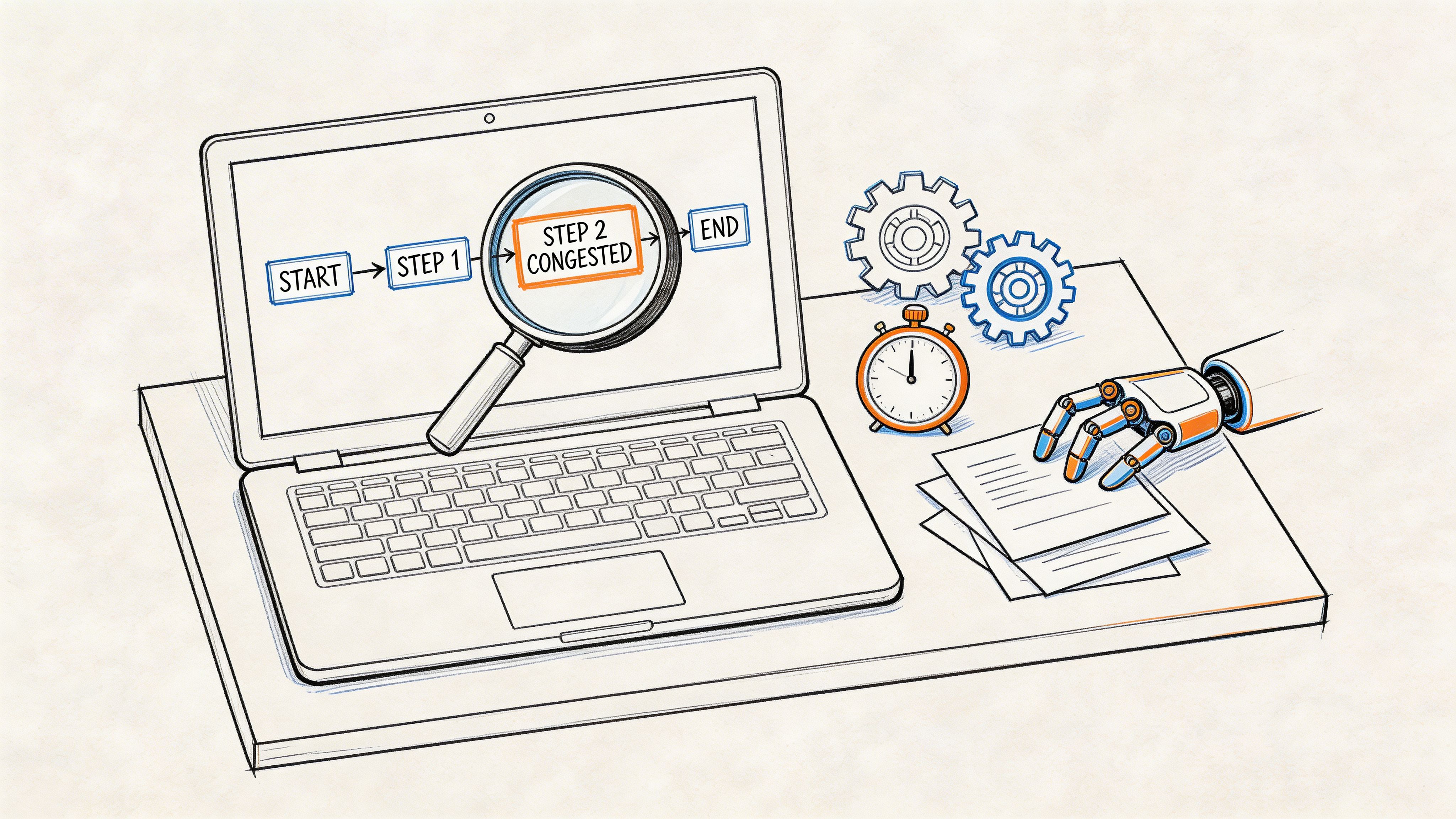

The risk is highest on calls with many participants, non-US speakers, or weak integrations. A 2025 Forrester report notes that 68% of enterprises report less than 80% accuracy in multi-speaker AI transcription for non-US teams, and 42% cite integration failures, which reinforces the need for a human review pass, especially for speaker attribution, as summarized in this discussion of conference call transcription software challenges.

What usually goes wrong

In practice, the errors tend to be predictable.

- Proper nouns get mangled. Product names, client names, and internal project codes often come out wrong.

- Speaker labels drift. One speaker gets mislabeled, then the next few turns inherit the same error.

- Cross-talk collapses meaning. Two short interjections can merge into one confusing sentence.

- Jargon gets normalized into common language. The model picks a familiar word instead of your intended technical term.

If you know those failure modes, editing gets much faster because you stop trying to “proofread everything” and instead check the parts most likely to matter.
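For the proper-noun and jargon failures in particular, a small correction glossary can handle the repeat offenders. This is a minimal sketch, assuming you maintain your own mapping of known mis-transcriptions; the glossary entries and the function name are made up for illustration.

```python
import re


def apply_glossary(text, glossary):
    """Replace known mis-transcriptions and return (new_text, change_log).

    glossary maps the wrong form to the intended term. Matching is
    case-insensitive and limited to whole words or phrases.
    """
    changes = []
    for wrong, right in glossary.items():
        pattern = re.compile(rf"\b{re.escape(wrong)}\b", re.IGNORECASE)
        # A function replacement keeps backslashes in `right` literal.
        text, count = pattern.subn(lambda _match, r=right: r, text)
        if count:
            changes.append((wrong, right, count))
    return text, changes


# Hypothetical glossary built from errors seen in earlier transcripts.
glossary = {"sales force": "Salesforce", "cube flow": "Kubeflow"}
fixed, log = apply_glossary(
    "We moved the sales force data into cube flow.", glossary
)
```

The change log matters as much as the corrected text: it shows a reviewer exactly which terms were swapped, so an over-eager replacement can be caught instead of silently altering the record.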

Use a review pass with a fixed checklist

Don't edit transcripts like prose. Edit them like records.

A practical checklist looks like this:

- Verify speaker names first. If attribution is wrong, every action item tied to that speaker is suspect.

- Scan decisions next. Confirm that approvals, rejections, and next steps are captured in plain language.

- Correct names and terminology. Search for likely trouble spots such as customer names, product lines, and acronyms.

- Check timestamps around key moments. Especially if the transcript may be used later for audit, compliance, or dispute resolution.

- Remove filler only after accuracy is stable. Clean readability last, not first.

That order matters. People often start by deleting “um” and tightening sentences. It feels productive, but it doesn't improve reliability.

Review the transcript where the meeting had the most friction, not where it sounded smooth.

Decide how polished the final record needs to be

Not every conference call needs the same editing standard. A weekly internal sync can live with light cleanup. A legal, financial, HR, or client escalation call needs a more careful pass.

Use this simple comparison:

| Call type | Editing level | Main focus |
|---|---|---|
| Internal status call | Light | Decisions, owners, deadlines |
| Customer or vendor call | Moderate | Commitments, commercial terms, objections |
| Regulated or sensitive discussion | Heavy | Exact wording, speaker identity, timestamp verification |

That distinction keeps your team from overspending time on low-risk transcripts while still protecting the meetings that need a stronger record.

Clean for use, not for perfection

The goal isn't to produce literary text. The goal is to produce something trustworthy and easy to use.

That usually means:

- fixing names,

- correcting who said what,

- preserving the meaning of decisions,

- and making the transcript readable enough that nobody has to reopen the audio unless they want to verify something specific.

If your team treats editing as the finishing step instead of an annoying exception, conference call transcription becomes dependable. That's the point where people start using transcripts proactively rather than only when something goes wrong.

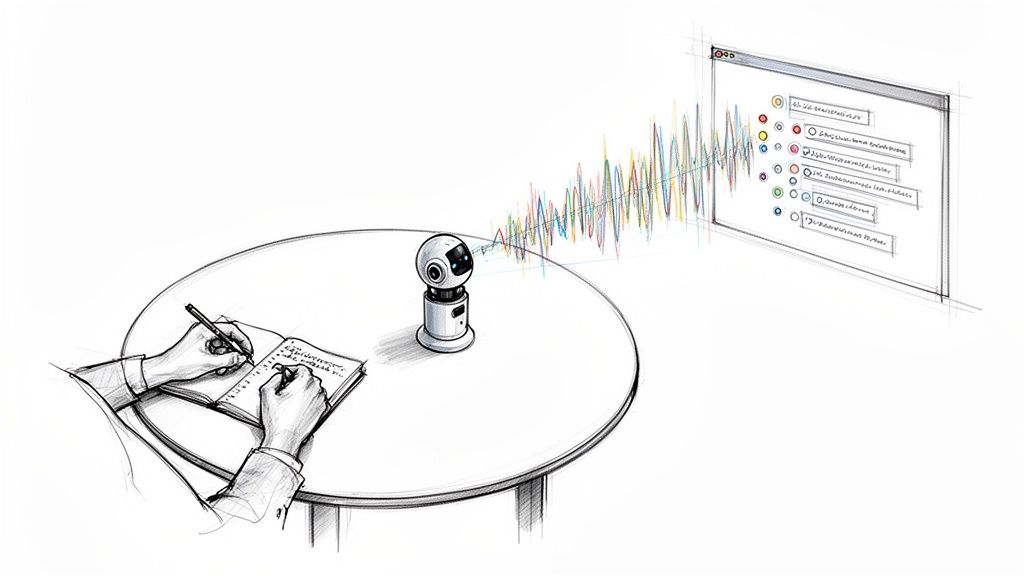

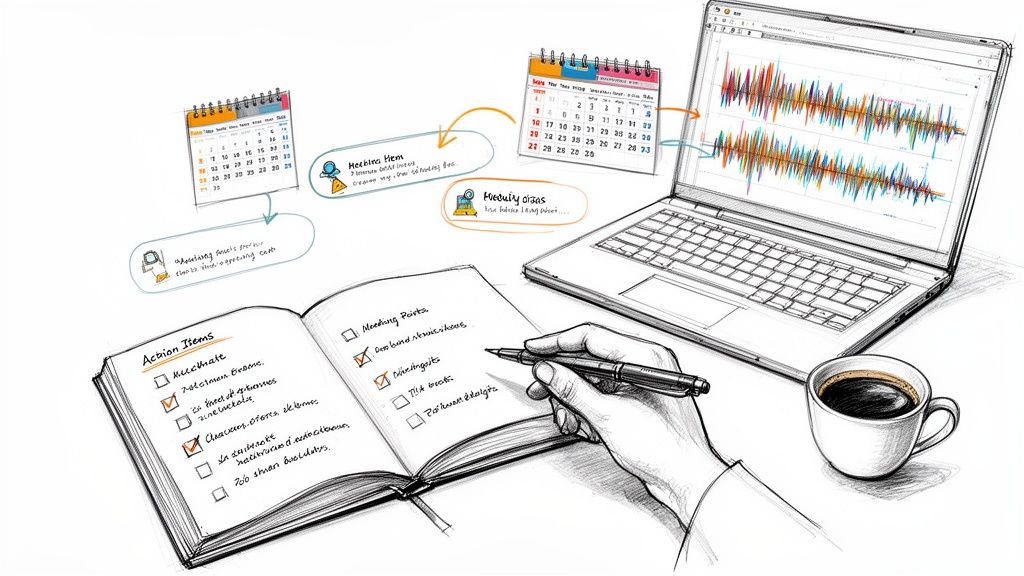

Turn Your Transcript into Actionable Insights

A transcript by itself is just stored potential. The value shows up when someone converts it into outcomes the team can act on.

That's why strong conference call transcription workflows don't stop at text. They produce a summary people will read, action items people can own, and a searchable record people can return to later. Organizations using AI transcription tools report a 25% to 30% increase in meeting productivity, and tools that surface action items and insights help reclaim strategic capacity equivalent to over a month of annual productivity per user, according to automated transcription statistics from Sonix.

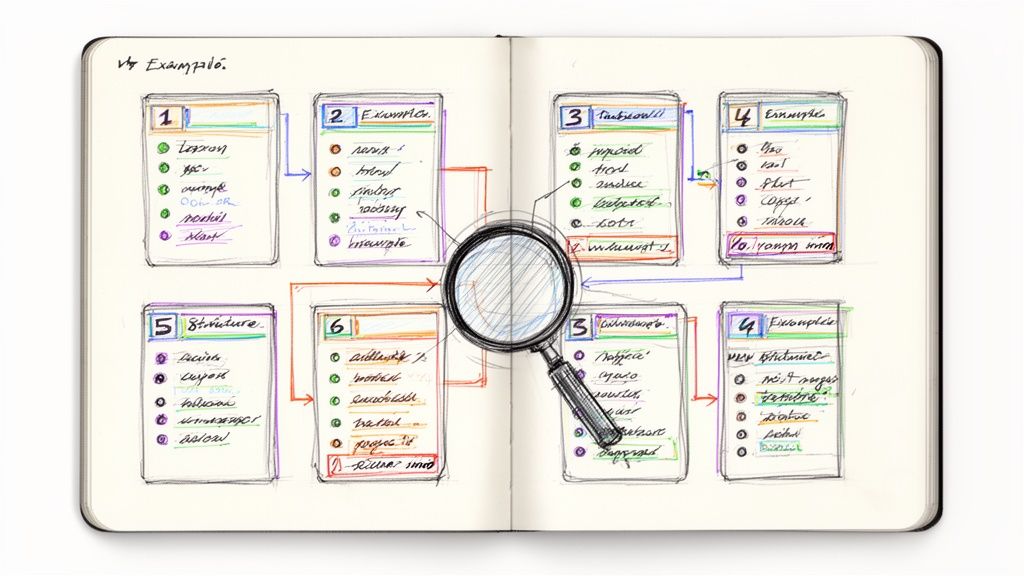

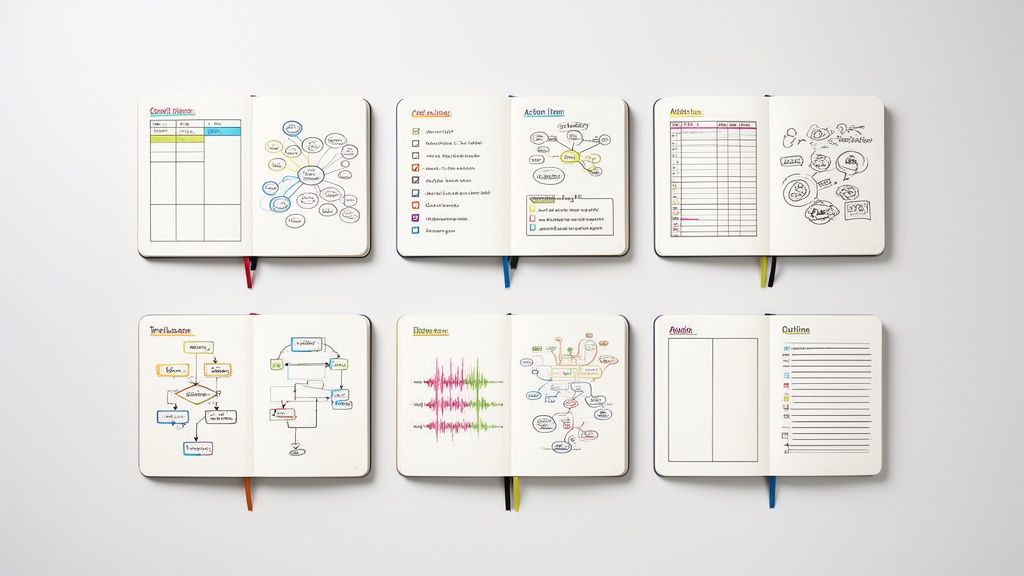

What to extract from every transcript

I've found that teams generally need the same four outputs after a serious call:

- Decision summary: What was agreed, rejected, deferred, or left open.

- Action list: Who owns what next.

- Risk list: Anything blocked, unclear, or likely to cause rework.

- Reference notes: Key context worth preserving for people who missed the call.

If you only create a transcript and archive it, the team still has to do interpretation work later. If you extract these items immediately, the call starts paying for itself.

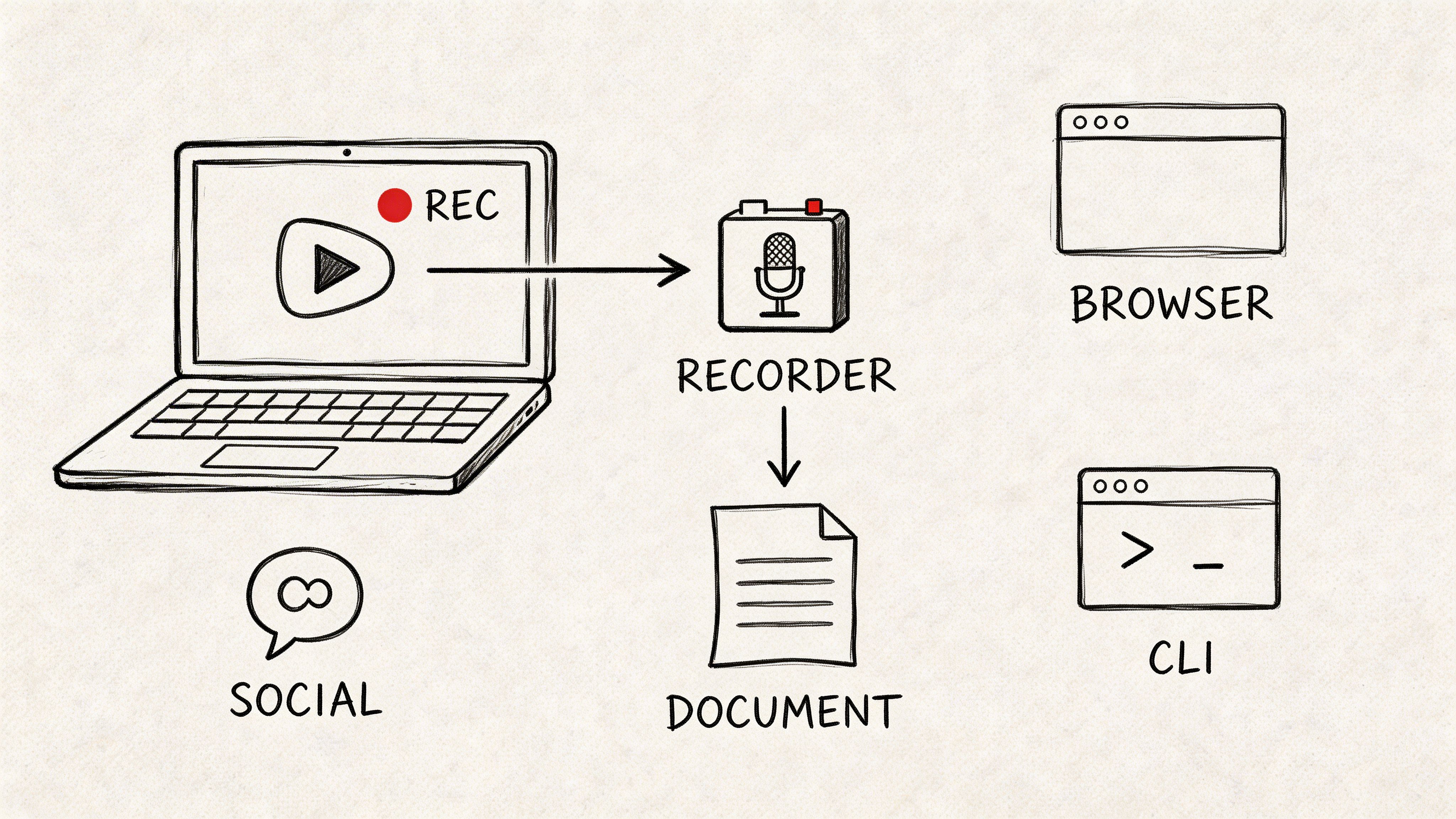

A simple post-call conversion workflow

This is the workflow that holds up in real teams:

- Generate a short summary first. Keep it tight enough that someone can read it in under a minute.

- Pull explicit commitments from the transcript. Look for phrases like “I'll handle,” “we'll send,” “let's approve,” and “we need to confirm.”

- Assign owners in writing. Don't leave action items attached to vague groups like “marketing” or “ops.”

- Flag unresolved points. Hidden disagreement is one of the main reasons people have to revisit call recordings.

- Publish the output where work already happens. Project tools, CRM notes, team docs, or shared channels.

This is the stage where conference call transcription starts affecting execution instead of just documentation.
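The commitment-spotting step in the workflow above can be sketched with a simple phrase search. This is only a first-pass filter, assuming the transcript is available as (timestamp, speaker, text) turns; the cue list comes from the phrases mentioned above, and the function and field names are illustrative. A person still needs to confirm each candidate and assign an owner.

```python
import re

# Cue phrases from the workflow above; extend with your team's own wording.
COMMITMENT_PATTERN = re.compile(
    r"\b(i['’]ll handle|we['’]ll send|let['’]s approve|we need to confirm)\b",
    re.IGNORECASE,
)


def extract_commitments(turns):
    """Return candidate action items from (timestamp, speaker, text) turns."""
    items = []
    for timestamp, speaker, text in turns:
        # Split each turn into sentences so the item quotes only the commitment.
        for sentence in re.split(r"(?<=[.!?])\s+", text):
            match = COMMITMENT_PATTERN.search(sentence)
            if match:
                items.append({
                    "timestamp": timestamp,
                    "speaker": speaker,
                    "cue": match.group(1).lower(),
                    "sentence": sentence.strip(),
                })
    return items


turns = [
    ("00:12:03", "Sarah", "Sounds good. I'll handle the vendor contract by Friday."),
    ("00:12:20", "Dev", "We need to confirm pricing with finance first."),
    ("00:13:00", "Lee", "Thanks everyone."),
]
candidates = extract_commitments(turns)
```

Keeping the timestamp and speaker on each candidate means every action item links back to the moment it was said, which makes disputes quick to settle.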

If a transcript doesn't answer “What did we decide?” and “Who does what next?”, it's unfinished.

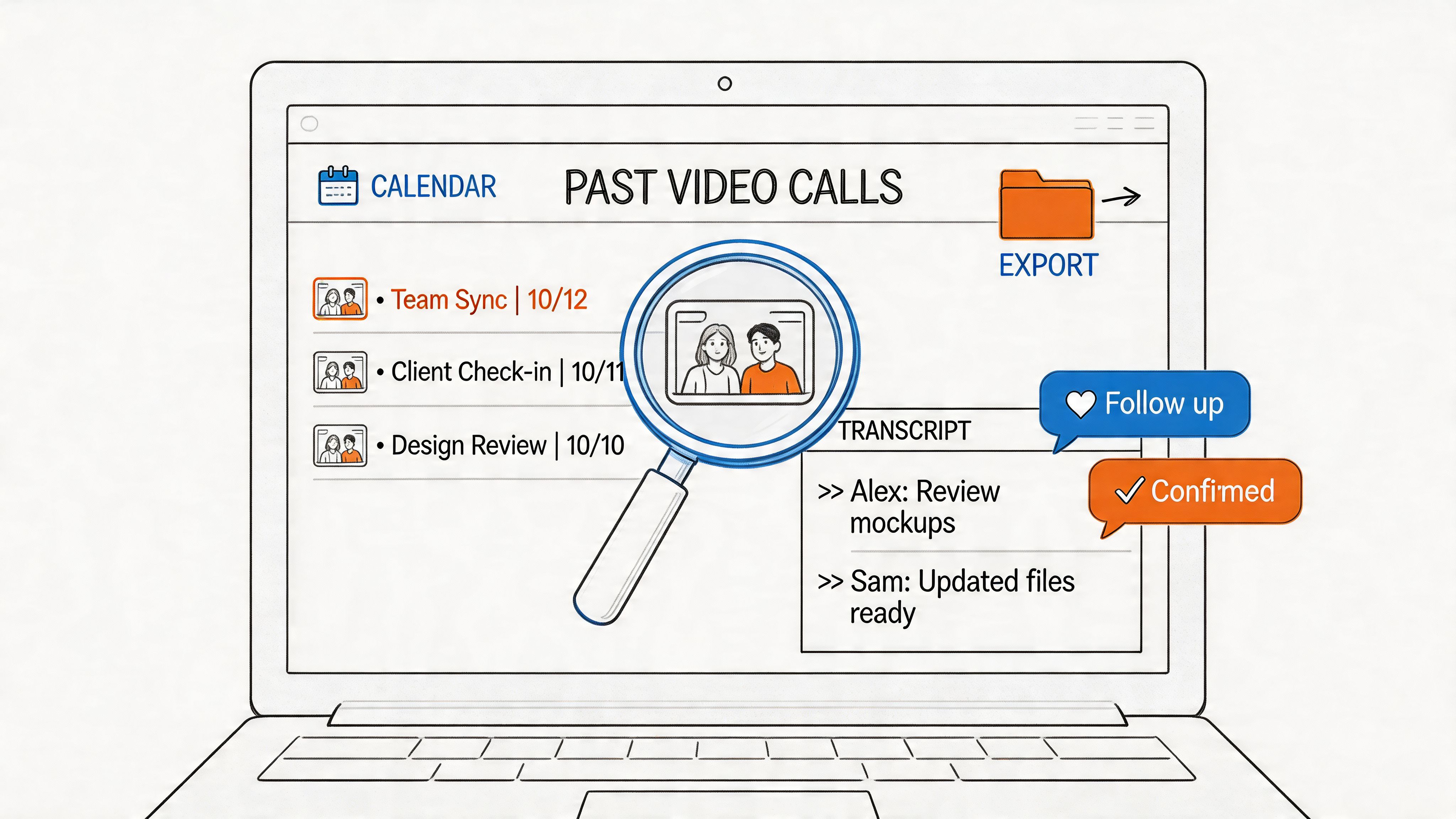

Use transcripts as a reusable knowledge source

Teams often underestimate the long-term value of searchable call history. Once transcripts are organized well, they become a reference library for onboarding, customer context, recurring objections, and internal decision history.

That matters beyond meetings too. If your team also analyzes voice-of-customer material, support calls, interviews, or user research, the same habit of extracting themes and signals applies. A helpful companion resource is BeyondComments' top feedback tools list, especially if you're connecting transcript review with broader feedback analysis work.

The common mistake is treating each conference call as isolated. It usually isn't. Calls repeat patterns. Prospects raise the same concerns. Internal teams revisit the same bottlenecks. Good transcript practices let you see those patterns sooner.

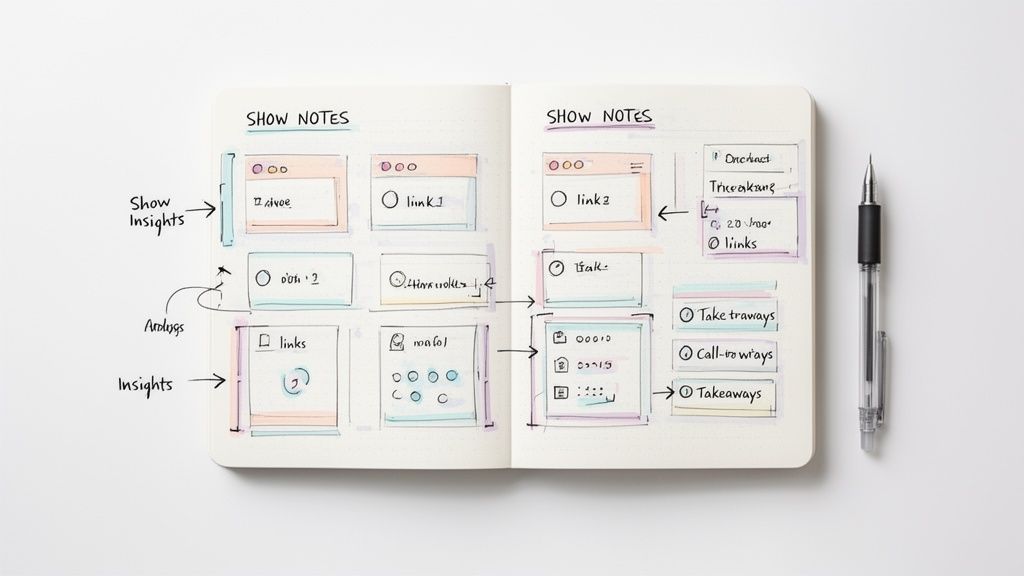

Don't ship raw transcripts to busy people

Most stakeholders won't read a full transcript unless they have to. They will read a sharp summary with clear owners and links back to the source.

So the final deliverable after a conference call should usually be layered:

- a short summary for speed,

- a task list for accountability,

- and the full transcript for verification.

That structure respects how people work. Executives skim. Managers assign. Individual contributors check details when needed. A transcript supports all three, but only if you package it properly.
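The layered deliverable can be assembled as a single Markdown note. The sketch below is one possible layout, not a standard: the function name, the section headings, and the example file path are all assumptions.

```python
def build_recap(title, summary, tasks, transcript_ref):
    """Build a layered post-call note: summary, then tasks, then the source.

    tasks: list of (owner, task, due) tuples
    transcript_ref: link or path to the full transcript
    """
    lines = [f"# {title}", "", "## Summary", summary.strip(), "", "## Action items"]
    if tasks:
        lines += [f"- [ ] {owner}: {task} (due {due})" for owner, task, due in tasks]
    else:
        lines.append("- None recorded")
    lines += ["", "## Full transcript", transcript_ref]
    return "\n".join(lines) + "\n"


recap = build_recap(
    "Budget sync",
    "Approved Q3 spend.",
    [("Sarah", "Send contract", "Fri")],
    "transcripts/budget-sync.md",  # hypothetical path
)
```

The ordering is deliberate: the part most people read comes first, and the full record sits last as a reference rather than the main body.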

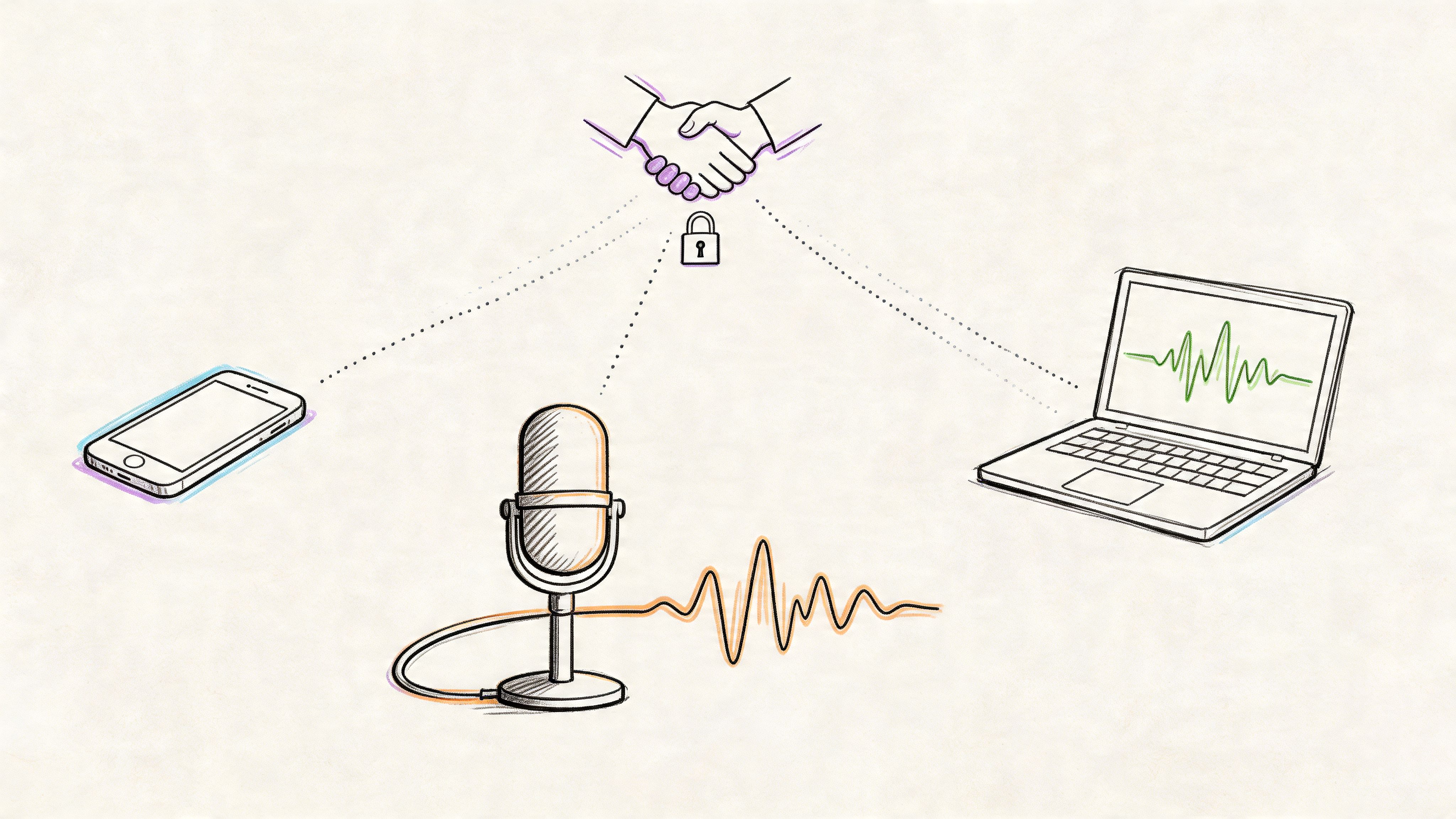

Share and Secure Your Conference Call Data

A transcript only helps if the right people can access it safely. That's where many workflows break down. Teams either overshare sensitive content or lock it away so tightly that nobody uses it.

The better approach is controlled distribution. Export the transcript in the format that matches the job. Word works well when someone needs to edit language. PDF is better when the record shouldn't shift casually. TXT is useful for lightweight storage and automation. Markdown works well when your team keeps notes in docs, wikis, or repositories.

Match the format to the use case

Think in terms of destination, not preference.

- Project management follow-up: Share a cleaned summary and action list in the work tracker.

- Client record: Save a stable version in the account system or deal record.

- Internal knowledge base: Store the transcript with tags that make later retrieval easier.

- Formal documentation: Keep the timestamped version attached to the original recording.

That distribution choice is what turns conference call transcription into a team habit instead of a solo artifact.

Security is part of usability

Sensitive calls often include customer details, financial discussion, personnel issues, or health-related information. If people don't trust the workflow, they'll stop using it or start creating side-channel workarounds.

Compliance features are essential in this context. Teams often look for vendors that support encryption and recognized controls such as SOC 2 Type II, especially when transcripts move through business systems that store confidential information. If your calls involve healthcare-related data, it also helps to understand adjacent security expectations. A practical example is this overview of Affordable Pentesting HIPAA services, which gives useful context for how organizations validate security around systems handling sensitive information.

Build simple access rules

You don't need an elaborate policy to improve safety. You need a clear one.

Use a few defaults:

- Limit raw transcript access to people who need the full record.

- Share summaries more broadly when the details are sensitive but outcomes need visibility.

- Store exports in approved systems instead of personal drives or ad hoc chat threads.

- Keep the original audio linked when verification may be necessary.
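Defaults like these are easier to follow when they are written down as an explicit rule. Here is a minimal sketch, assuming a made-up set of roles; the role names and the grants are hypothetical and should be replaced by your organization's own policy.

```python
# Hypothetical roles; adjust to match your organization's policy.
FULL_RECORD_ROLES = {"host", "compliance"}
SUMMARY_ROLES = {"host", "compliance", "attendee", "stakeholder"}


def allowed_artifacts(role, sensitive):
    """Return which call artifacts a role may open.

    Full-record roles always get everything. Other known roles get the
    summary, plus the transcript only when the call is not sensitive.
    Unknown roles get nothing.
    """
    if role in FULL_RECORD_ROLES:
        return {"summary", "transcript", "audio"}
    if role in SUMMARY_ROLES:
        return {"summary"} if sensitive else {"summary", "transcript"}
    return set()
```

For example, `allowed_artifacts("stakeholder", sensitive=True)` returns only the summary, which matches the idea of sharing outcomes broadly while keeping the raw record limited.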

A trustworthy conference call transcription process is one people can repeat without worrying that convenience is creating risk. That's what makes it scalable.

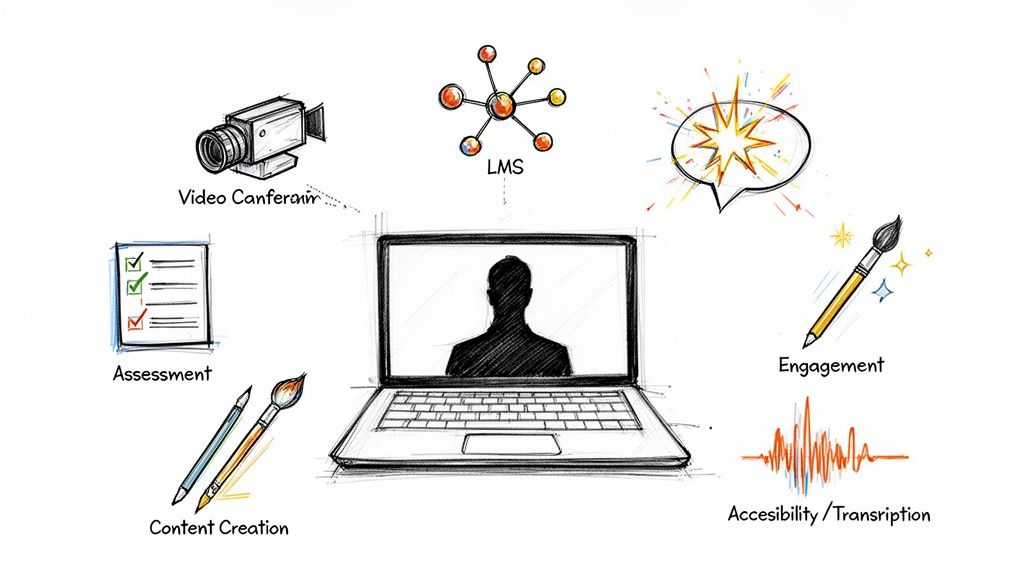

If you want a simpler way to run that full workflow, Whisper AI can help. It transcribes conference calls, detects speakers, adds timestamps, generates summaries and highlights, and lets you export the result in formats your team can use. It fits best when you want one system for turning recordings into searchable, shareable records instead of juggling separate tools for transcription, cleanup, and follow-up.