What Are Language Models and How Do They Work?

At its core, a language model is a specialized AI that's been trained to understand and create human language. From my experience working with these tools, the best way to think of it is as a super-powered autocomplete that has read a massive portion of the internet. These models are the engines running behind the scenes of many AI tools you likely already use, from your search bar to modern transcription services.

What Are Language Models and Why Do They Matter

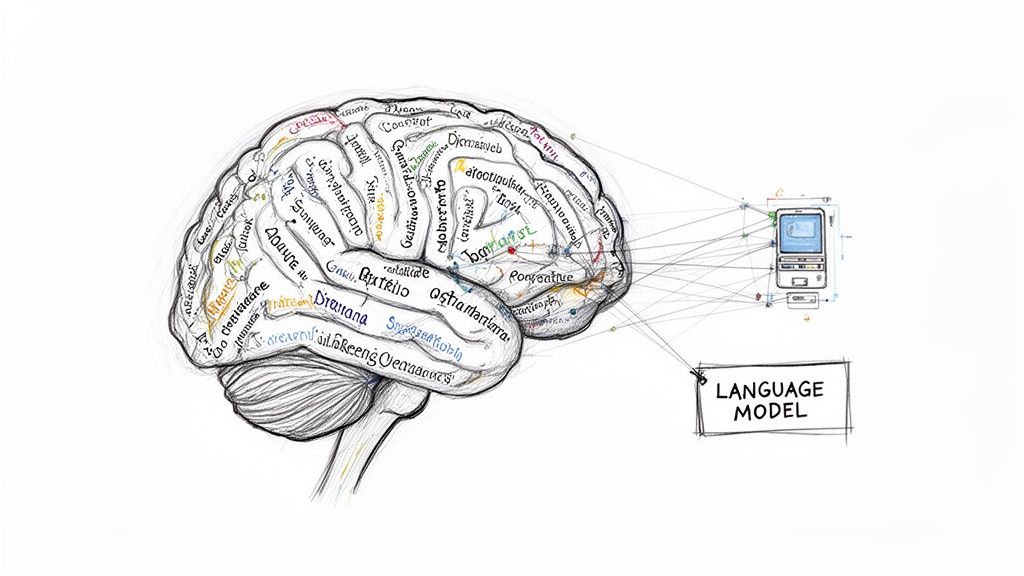

A language model is an AI that learns to process, understand, and generate text that feels human. It achieves this by analyzing enormous collections of text data—think countless books, articles, and websites—to learn the patterns, grammar, and subtle rules of how we communicate. This massive training exercise teaches it to predict the next word in a sentence, which is the foundational skill for all sorts of language-related jobs.

Simply put, these models are the powerhouse behind many of the smart tools professionals rely on today. They go far beyond recognizing keywords; they're designed to work out the meaning behind the words you use. This marks a major shift in how we interact with our devices, making them feel more like collaborative partners than simple machines. To see the most advanced versions of this technology, it's worth learning what a large language model (LLM) is: LLMs are the biggest and most capable language models available today.

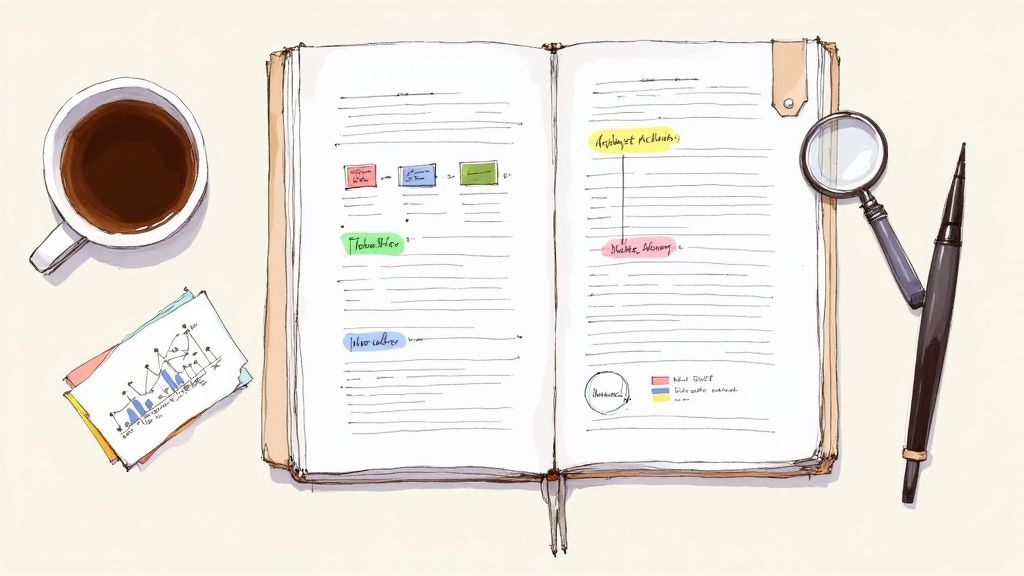

For a quick overview, this table breaks down what these models do and the value they bring to the table based on my direct experience using them.

Language Models At a Glance

Ultimately, a language model translates the messy, unstructured nature of human language into something a computer can work with, unlocking practical solutions for everyday professional tasks.

The Real-World Impact on Your Work

The buzz around language models isn't just hype; it’s about real-world efficiency and unlocking new ways of working. I've found they take on tedious tasks and open up creative avenues that were once impractical. For anyone who works with words or information, these tools are quickly becoming essential.

So, what makes them so important for professionals?

- Productivity Boost: They can transcribe a multi-hour meeting in minutes, boil down a dense report into a few bullet points, or draft emails, freeing up your time for more strategic work.

- Clearer Communication: By helping you fine-tune your writing, spot grammatical errors, or even translate between languages, these models help your message land the way you intended.

- Deeper Insights: They can comb through thousands of customer reviews or search massive archives to spot trends and pull out key information that a person could easily miss.

The real power of a language model is its ability to manage the complexity of human language on a massive scale. It understands context, infers meaning, and produces coherent text, turning raw information into a valuable, organized resource.

These models are a key component of a wider field called Natural Language Processing (NLP). If you're curious about the science that makes all this possible, our guide to Natural Language Processing offers a great starting point.

From Simple Rules to Intelligent AI

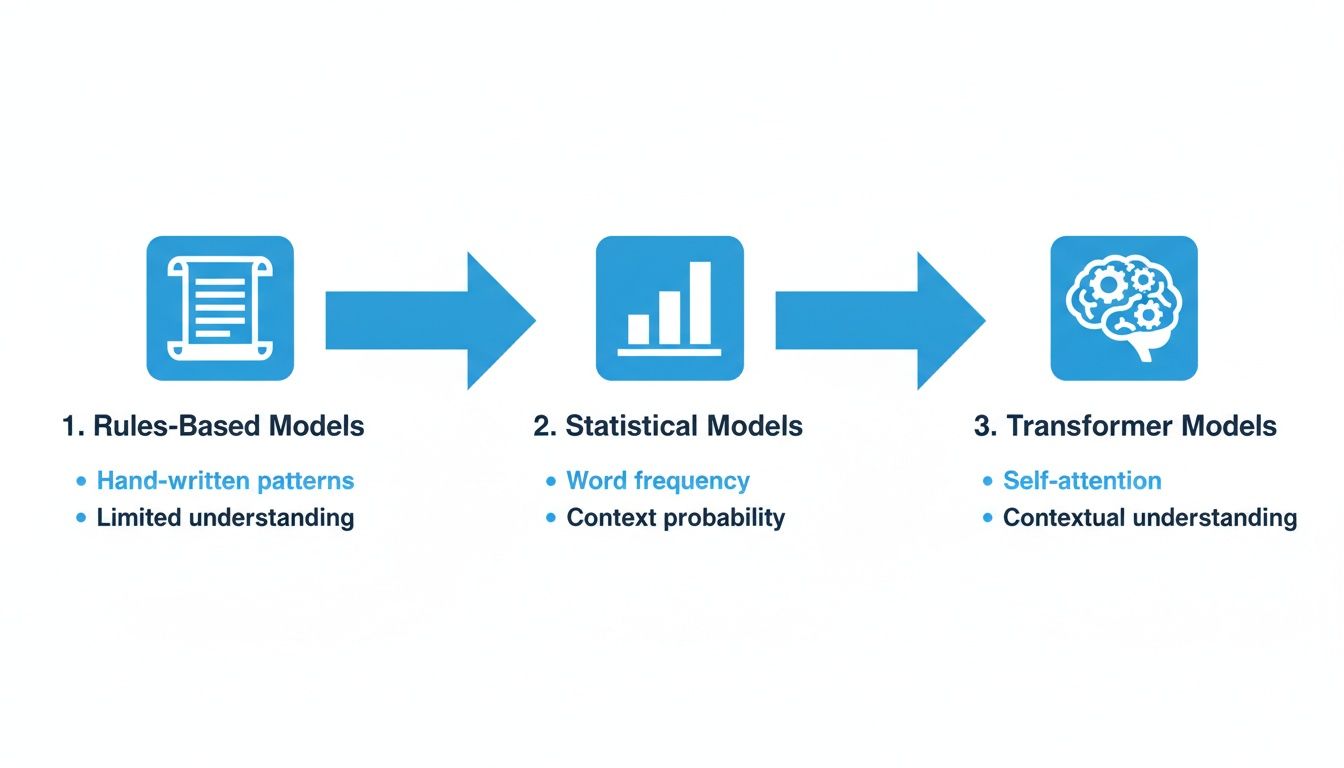

The journey to today’s sophisticated language models wasn't a sudden leap; it's a story that has unfolded over decades. The earliest attempts to get computers to understand language were built on a foundation of simple, hard-coded rules. These systems were incredibly rigid, only able to handle very specific tasks they were explicitly programmed for—not unlike a basic calculator that only knows the few operations it was built to perform.

This rule-based approach had its first big moment back in the mid-20th century. In 1954, an experiment by Georgetown University and IBM showed a machine translating more than 60 Russian sentences into English. It was a landmark event, but the system itself was quite primitive, running on just 6 grammatical rules and a tiny 250-word vocabulary. Still, it was a proof of concept: it showed that, in theory, a machine could process human language. You can explore the full history of these early developments and see how they paved the way for everything that followed.

The real problem with these early models was their inability to learn. They couldn't adapt to new words, slang, or the endless exceptions that make language so messy and human. Every single rule had to be painstakingly written by a person.

A Shift Toward Learning from Data

The next major breakthrough came when researchers started using statistical models. Instead of feeding computers a list of rules, they fed them massive amounts of text and let the models figure out the patterns themselves. By analyzing which words frequently appear together, they could start predicting the next word in a sentence based purely on probability. This was a huge step. For the first time, models were learning from real-world language, not a rigid, pre-programmed script.

This new statistical approach gave rise to a few key model types that pushed the field forward:

- N-gram Models: These were fairly simple. They predict the next word by looking only at the previous n-1 words. For example, a trigram (3-gram) model that sees "thank you" might predict "very" simply because "thank you very" is a common sequence in the text it was trained on.

- Recurrent Neural Networks (RNNs): A significant improvement, RNNs introduced a concept of "memory." They could hold on to information from earlier in a sentence, which allowed them to understand much more complex grammar and context.

- Long Short-Term Memory (LSTM): LSTMs were a special type of RNN designed to fix a major flaw. Early RNNs tended to "forget" what happened at the beginning of a long sentence by the time they reached the end. LSTMs were far better at remembering information over longer stretches of text, making them much more powerful.
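To make the n-gram idea concrete, here is a minimal trigram (n = 3) sketch in Python: it counts which word follows each two-word context and predicts the most frequent one. The tiny corpus and the function names `train_ngram` and `predict_next` are hypothetical, invented for illustration; practical n-gram models also apply smoothing so that word sequences never seen in training don't get zero probability.

```python
from collections import Counter, defaultdict

def train_ngram(tokens, n=3):
    """Count how often each word follows each (n-1)-word context."""
    counts = defaultdict(Counter)
    for i in range(len(tokens) - n + 1):
        context = tuple(tokens[i : i + n - 1])
        next_word = tokens[i + n - 1]
        counts[context][next_word] += 1
    return counts

def predict_next(counts, context):
    """Return the most frequent follower of `context`, or None if it was never seen."""
    followers = counts.get(tuple(context))
    if not followers:
        return None
    return followers.most_common(1)[0][0]

corpus = "thank you very much . thank you very kindly . thank you so much .".split()
model = train_ngram(corpus, n=3)
print(predict_next(model, ["thank", "you"]))  # very
```

The model has no idea what "thank you" means; "very" wins only because it followed that pair twice in the corpus while "so" followed it once.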

The Rise of Neural Networks

These earlier methods set the stage for the far larger and more powerful neural networks we have today. Inspired by the interconnected structure of the human brain, neural networks are phenomenal at recognizing incredibly subtle and complex patterns in language. They don't just count how often words appear together; they learn the deep, underlying relationships and contexts that actually give our words meaning.

This evolution—from simple rules to data-driven learning—is what truly separates modern AI from its ancestors. It’s the difference between a machine that just follows a strict set of commands and one that can understand, adapt, and even generate nuanced human communication. This is the foundation that today's most impressive language tools are built on.

How a Language Model Learns to 'Think'

So, how does a language model go from a digital blank slate to something that can write an email or summarize a dense report? The process is a lot like training a very, very dedicated apprentice, but the library it studies is a massive slice of the internet.

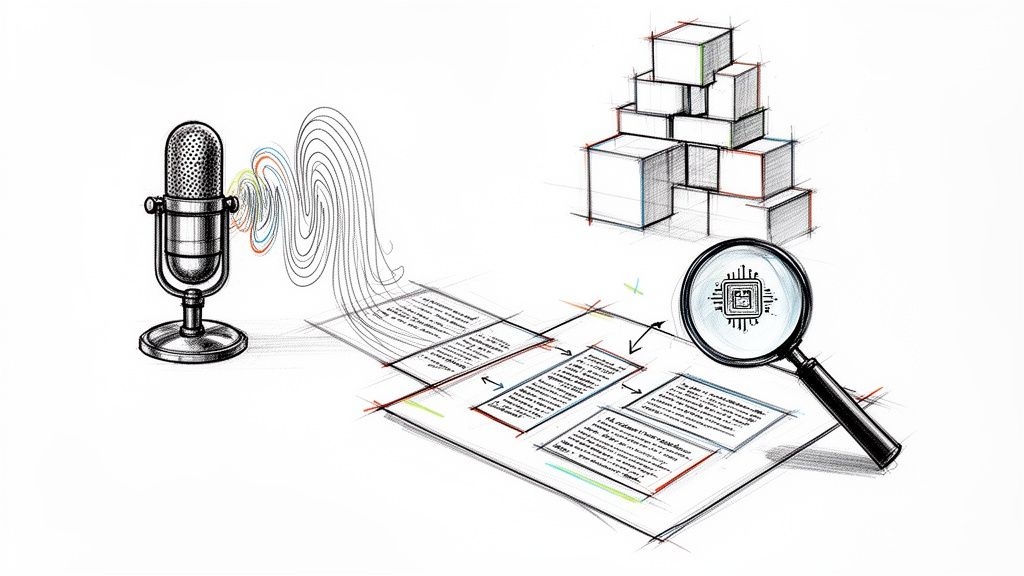

This gigantic collection of text gets broken down into smaller units called tokens. Think of tokens as the basic building blocks of language—they can be whole words like “hello,” parts of words like “-ing,” or even just a comma. For instance, the simple phrase "what are language models" would likely be split into four tokens: ["what", "are", "language", "models"].
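The toy sketch below shows how a tokenizer can split text into whole words, word pieces, and punctuation by greedy longest-match against a fixed vocabulary. It is not any real model's tokenizer: the vocabulary `VOCAB` and the function `tokenize` are hypothetical, and production tokenizers (byte-pair encoding, for example) learn tens of thousands of subword units from data rather than using a hand-written list.

```python
def tokenize(text, vocab):
    """Split text into tokens by greedy longest-match against a fixed vocabulary.

    Whitespace separates words; within a word we repeatedly take the longest
    vocabulary entry that matches at the current position. Characters not
    covered by the vocabulary become single-character tokens.
    """
    tokens = []
    for word in text.split():
        start = 0
        while start < len(word):
            end = len(word)
            # Shrink the candidate until it is in the vocabulary (or 1 char long).
            while end > start + 1 and word[start:end] not in vocab:
                end -= 1
            tokens.append(word[start:end])
            start = end
    return tokens

VOCAB = {"what", "are", "language", "model", "models", "hello", "walk", "ing", ","}
print(tokenize("what are language models", VOCAB))
# ['what', 'are', 'language', 'models']
print(tokenize("hello, walking", VOCAB))
# ['hello', ',', 'walk', 'ing']
```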

During its training, the model has one primary objective: to predict the next token in a sequence. After being shown the tokens ["what", "are", "language"], its job is to figure out that "models" is a highly probable next token. It does this over and over, billions of times, and in the process, it starts to absorb the intricate patterns, grammar, and contextual nuances of human language.
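Here is a minimal sketch of that objective for a single step. A model outputs a raw score (logit) for each candidate token; softmax turns the scores into probabilities, and the cross-entropy loss is small when the true next token gets high probability. Training adjusts the model's weights to shrink this loss across billions of examples. The four candidate tokens and their scores are made up for illustration; a real model scores every token in a vocabulary of tens of thousands.

```python
import math

def softmax(scores):
    """Turn raw scores (logits) into probabilities that sum to 1."""
    m = max(scores.values())  # subtract the max for numerical stability
    exps = {tok: math.exp(s - m) for tok, s in scores.items()}
    total = sum(exps.values())
    return {tok: e / total for tok, e in exps.items()}

def next_token_loss(scores, target):
    """Cross-entropy loss: low when the true next token gets high probability."""
    return -math.log(softmax(scores)[target])

# Hypothetical scores a model might assign after seeing ["what", "are", "language"].
logits = {"models": 4.0, "skills": 2.0, "barriers": 1.0, "banana": -2.0}
probs = softmax(logits)
print(max(probs, key=probs.get))  # models
print(round(next_token_loss(logits, "models"), 3))
print(round(next_token_loss(logits, "banana"), 3))
```

If the true next token were "banana", the loss would be much larger, and training would push the weights to raise that token's score in similar contexts.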

The Power of Paying Attention

For a long time, a major hurdle for language models was keeping track of context over long stretches of text. Earlier models had a short memory; they might forget the beginning of a paragraph by the time they got to the end. This all changed in 2017 with the introduction of the Transformer architecture.

Transformers brought a groundbreaking concept to the table: the attention mechanism. This allows the model to weigh the importance of different words when it's trying to make a prediction. It can essentially "pay attention" to the most relevant parts of the input, no matter where they appear in the text.

For example, consider the sentence: "The robot picked up the red ball because it was the closest." An attention mechanism helps the model correctly determine that "it" refers back to the "ball," not the "robot." This ability to link related ideas across a sentence was a huge breakthrough.
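The core calculation, scaled dot-product attention, fits in a few lines. The sketch below scores each word's key vector against a query vector for "it", turns the scores into weights with softmax, and averages the value vectors by those weights. This is a simplified illustration under loud assumptions: the 2-D vectors are hand-picked (so the "it refers to ball" link is built in rather than learned), keys double as values, and there is a single attention head. In a real Transformer, queries, keys, and values are learned projections with hundreds or thousands of dimensions, and many heads run in parallel.

```python
import math

def softmax(xs):
    m = max(xs)  # subtract the max for numerical stability
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]

def attention(query, keys, values):
    """Scaled dot-product attention for a single query vector.

    Each key is scored by its dot product with the query, divided by
    sqrt(d) to keep scores in a stable range; softmax turns the scores
    into weights, and the output is the weighted average of the values.
    """
    d = len(query)
    scores = [sum(q * k for q, k in zip(query, key)) / math.sqrt(d) for key in keys]
    weights = softmax(scores)
    output = [sum(w * v[i] for w, v in zip(weights, values)) for i in range(len(values[0]))]
    return weights, output

# Hand-picked 2-D vectors (hypothetical): the query for "it" points in a
# direction similar to the key for "ball".
tokens = ["robot", "picked", "ball", "closest"]
keys = [[1.0, 0.0], [0.0, 0.2], [0.0, 1.0], [0.3, 0.3]]
values = keys  # reuse keys as values to keep the toy small
query_it = [0.1, 2.0]

weights, _ = attention(query_it, keys, values)
for tok, w in zip(tokens, weights):
    print(f"{tok:>8}: {w:.2f}")
```

Because every word's score is computed independently, a Transformer can compute these weights for all positions at once instead of stepping through the sentence word by word.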

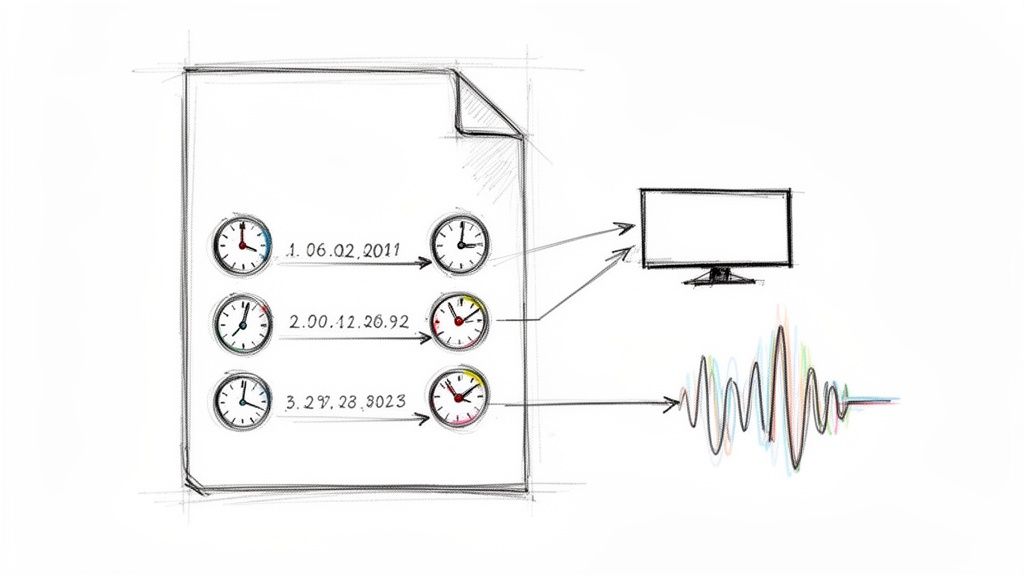

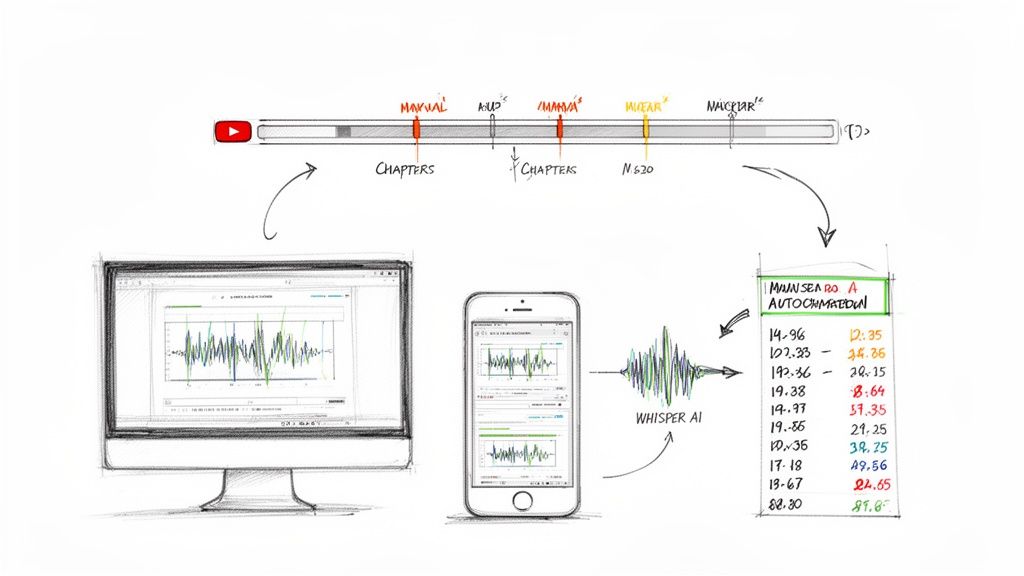

This chart shows how language models have evolved from simple, rigid systems to the sophisticated Transformer models we see today.

As you can see, the journey moves from basic rules to statistical probabilities, and finally to the deep contextual understanding that defines modern AI.

Building Understanding, Brick by Brick

This intense training process is what gives a language model its ability to "think." It's not conscious thought like a human's, but rather an incredibly advanced form of pattern matching. The model builds its knowledge layer by layer:

- Statistical Relationships: It learns that "hot" is often found near "coffee," but rarely near "ice cream."

- Grammatical Structure: It internalizes the rules of sentence construction, like how a noun is typically followed by a verb.

- Contextual Meaning: It figures out that the word "bank" has a different meaning in "river bank" compared to "a deposit in the bank."
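Statistical relationships like "hot" appearing near "coffee" begin with co-occurrence. The toy sketch below counts which words appear within two positions of a target word in a tiny, made-up corpus. The corpus and the helper `cooccurrences` are hypothetical; a modern language model does not store an explicit table like this, but its training signal captures similar regularities.

```python
from collections import Counter

def cooccurrences(sentences, target, window=2):
    """Count words appearing within `window` positions of `target`."""
    counts = Counter()
    for sentence in sentences:
        words = sentence.lower().split()
        for i, word in enumerate(words):
            if word != target:
                continue
            lo, hi = max(0, i - window), min(len(words), i + window + 1)
            for j in range(lo, hi):
                if j != i:
                    counts[words[j]] += 1
    return counts

corpus = [
    "she poured hot coffee into a mug",
    "the coffee is hot today",
    "we ate cold ice cream in the sun",
    "hot coffee and cold ice cream",
]
counts = cooccurrences(corpus, "hot")
print(counts["coffee"], counts["cream"])  # 3 0
```

Note that in the last sentence "cream" never lands within two words of "hot", even though both words appear in it; the window is what turns "same document" into "near each other".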

Through this massive, data-driven learning, the model constructs a complex internal map of language. This is why it can do far more than just predict the next word. It can summarize, translate, and generate entire paragraphs because it has learned the underlying logic of how words connect to form meaning.

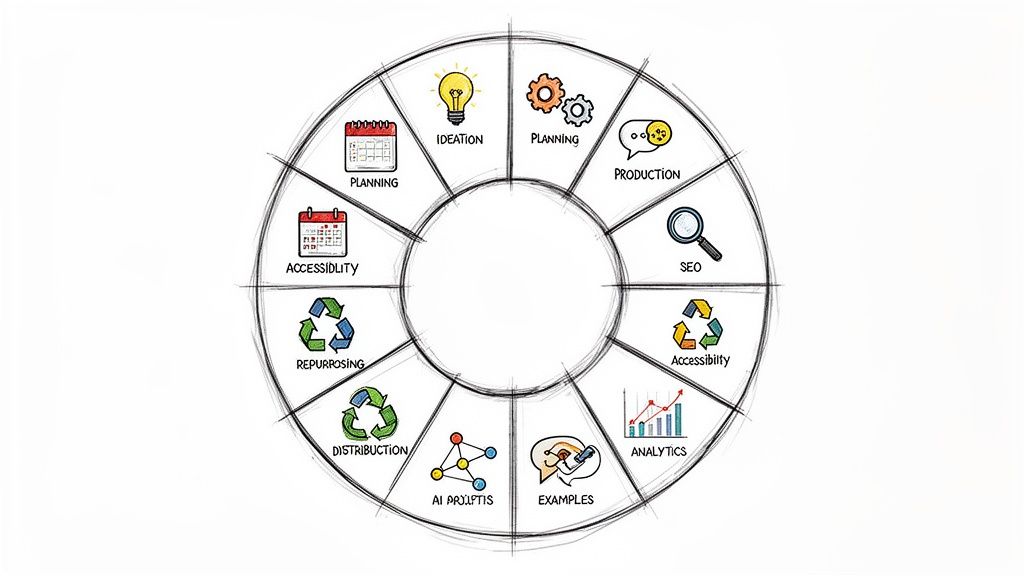

A Tour Through the Different Types of Language Models

Not all language models are built the same. Over the years, we've seen a few major shifts in how we teach machines to understand language. Each new approach brought its own strengths to the table, and knowing the differences helps explain why some AI tools are great for simple predictions while others can write entire articles.

Think of it as an evolution. We started by teaching machines simple statistical tricks, then gave them a form of memory, and finally arrived at a design that lets them grasp the big picture.

From Statistical Guesses to Neural Memory

The earliest language models were purely statistical, with N-grams being the most common. An N-gram model is really just a simple pattern-matcher. A 2-gram (or bigram) model, for example, predicts the next word by looking only at the one word that came before it, while a 3-gram (trigram) model looks at the previous two. If a trigram model sees "thank you," it might guess the next word is "very" simply because "thank you very" is a common sequence in its data. It's a blunt instrument, effective for basic tasks but with no real understanding of what a sentence means.

Things got more interesting with the arrival of Recurrent Neural Networks (RNNs). These models introduced a concept of memory. An RNN processes a sentence one word at a time, but it can "remember" what it saw earlier in the sequence. This was a huge step forward, allowing models to handle more complex grammar. A popular type of RNN, called a Long Short-Term Memory (LSTM) network, was even better at holding onto context over longer stretches of text.

This was like upgrading from a parrot that can only repeat common phrases to an assistant who can remember the topic of conversation from a few sentences ago. That shift was fundamental to getting machines to handle language in context.
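The "memory" of an RNN is a hidden state vector that each step mixes with the next input. The minimal sketch below uses the standard update h_new = tanh(W_x · x + W_h · h + b) with tiny hand-picked weights and input vectors; all the names and numbers (`W_X`, `W_H`, `BIAS`, `TOKEN_A`, `TOKEN_B`, `FILLER`) are hypothetical, chosen only so the effect is easy to see. Real RNNs learn their weights and use much larger vectors.

```python
import math

def rnn_step(x, h, w_x, w_h, b):
    """One recurrent step: new_h[i] = tanh(w_x[i] . x + w_h[i] . h + b[i])."""
    return [
        math.tanh(
            sum(w * xi for w, xi in zip(w_x[i], x))    # contribution of the current input
            + sum(w * hj for w, hj in zip(w_h[i], h))  # contribution of the memory
            + b[i]
        )
        for i in range(len(h))
    ]

def run_rnn(inputs, w_x, w_h, b):
    """Feed a sequence through the RNN one input at a time; return the final state."""
    h = [0.0] * len(b)  # the memory starts empty
    for x in inputs:
        h = rnn_step(x, h, w_x, w_h, b)
    return h

# Hypothetical weights and 2-D input vectors, chosen by hand for illustration.
W_X = [[1.0, -1.0], [0.5, 0.5]]
W_H = [[0.5, 0.0], [0.0, 0.5]]
BIAS = [0.0, 0.0]
TOKEN_A, TOKEN_B, FILLER = [1.0, 0.0], [0.0, 1.0], [0.5, 0.5]

h_a = run_rnn([TOKEN_A, FILLER, FILLER], W_X, W_H, BIAS)
h_b = run_rnn([TOKEN_B, FILLER, FILLER], W_X, W_H, BIAS)
print([round(v, 3) for v in h_a])
print([round(v, 3) for v in h_b])
```

The first component of the final state is positive if the sequence started with `TOKEN_A` and negative if it started with `TOKEN_B`, so the network still "remembers" the first input two steps later. Its magnitude shrinks at every step, though (about 0.76, then 0.36, then 0.18), which is a small-scale picture of the fading memory that LSTMs were designed to counter.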

Transformer Models: The Current Gold Standard

The real game-changer came in 2017 with the introduction of the Transformer architecture. Transformers did away with processing text word-by-word. Instead, they developed a way to look at all the words in a sentence at once, weighing the importance of each word in relation to every other word.

This is the magic behind the incredible contextual understanding we see in models like BERT and GPT. It’s how they can pick up on subtle relationships between words, even if they’re far apart in a paragraph. Today, nearly every advanced AI tool—from a search engine deciphering a complex question to an AI assistant drafting a professional email—has a Transformer model working under the hood.

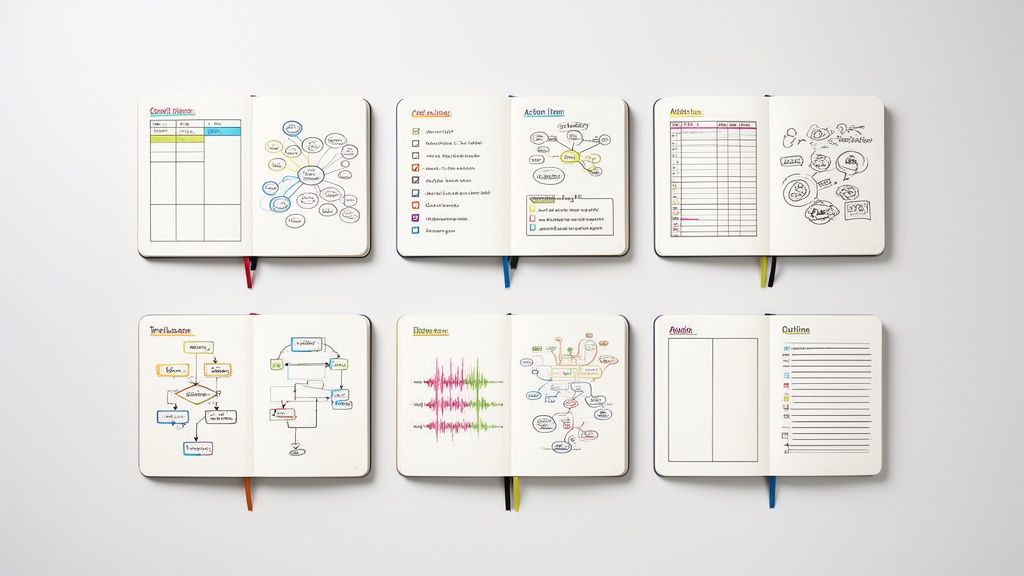

To put these advancements into perspective, here's a quick breakdown of how these architectures stack up against one another.

Comparison of Language Model Architectures

As you can see, each model represents a significant leap in a machine's ability to handle the complexity and nuance of human language.

Defining Large Language Models (LLMs)

This brings us to Large Language Models (LLMs). An LLM isn't a totally new architecture; it's a Transformer model scaled to a colossal size. We're talking about models trained on mind-boggling amounts of data, with billions—sometimes trillions—of parameters. This massive scale is what unlocks their surprising abilities to reason, write creatively, and tackle complex instructions.
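To put "colossal" into numbers, a widely used back-of-the-envelope rule estimates a decoder-only Transformer's parameter count as about 12 × layers × width². The sketch below applies it to the layer count (96) and width (12,288) published for GPT-3's largest configuration. It is only an approximation: it ignores embeddings, biases, and normalization layers, and the function name `approx_transformer_params` is our own.

```python
def approx_transformer_params(n_layers, d_model):
    """Rough parameter count for a decoder-only Transformer.

    Uses the rule of thumb 12 * n_layers * d_model**2: about 4 * d_model**2
    for the attention projections (query, key, value, output) plus about
    8 * d_model**2 for a feed-forward block whose hidden size is 4 * d_model.
    Embeddings, biases, and layer norms are ignored.
    """
    return 12 * n_layers * d_model ** 2

gpt3 = approx_transformer_params(n_layers=96, d_model=12288)
print(f"{gpt3 / 1e9:.0f} billion parameters")  # 174 billion parameters
```

The estimate lands close to GPT-3's reported 175 billion parameters, and it shows why size grows so quickly: doubling the width alone quadruples the count.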

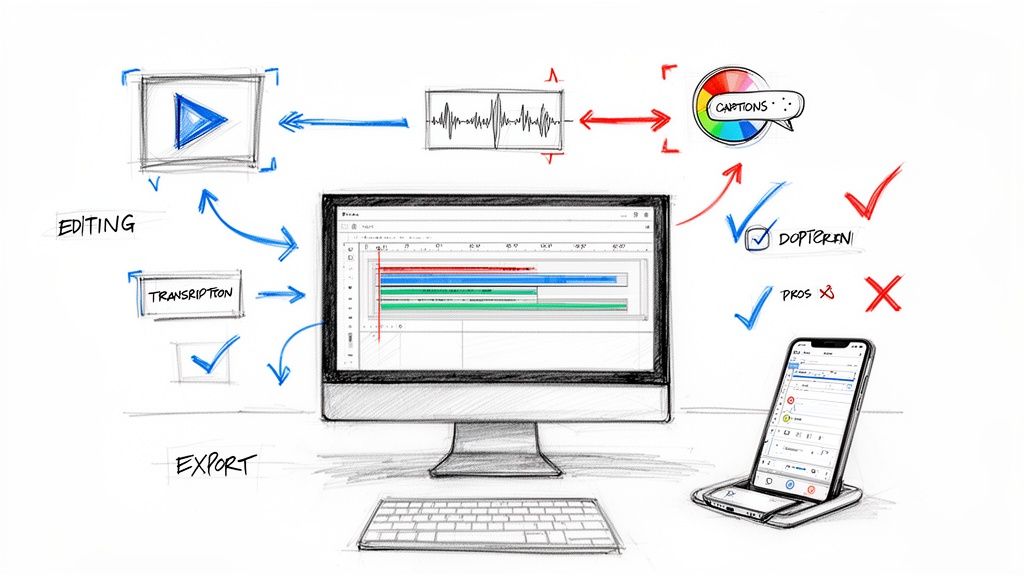

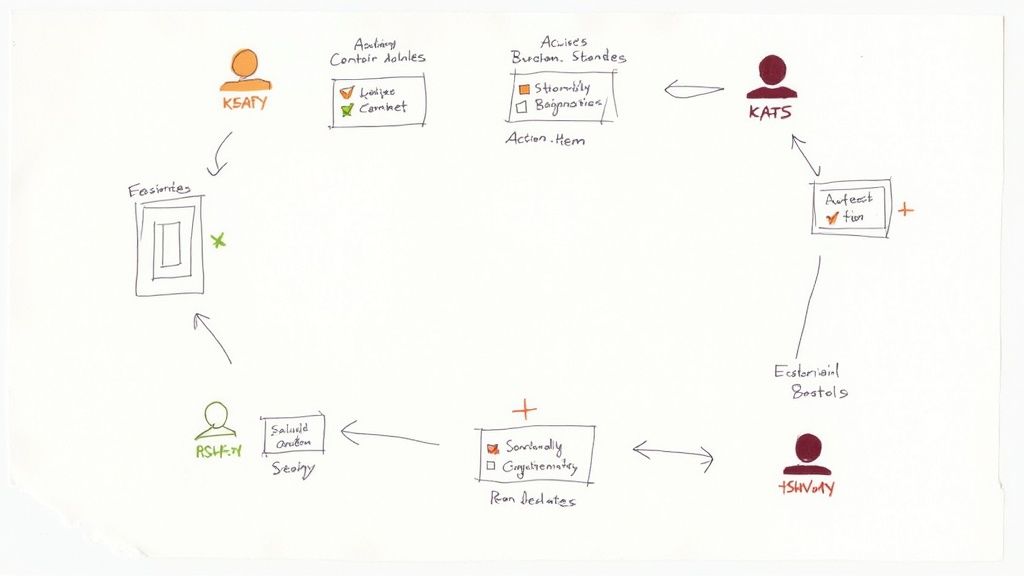

Tools like Whisper AI are a good example: they use these powerful models to deliver highly accurate audio transcriptions and generate insightful summaries from spoken content.

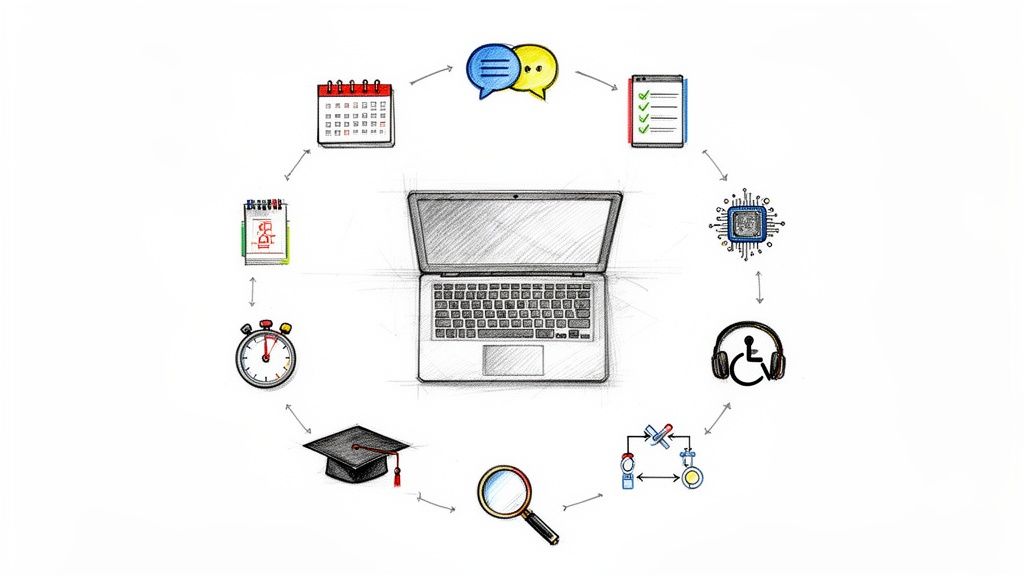

Practical Applications in Your Daily Workflow

The theory behind language models is fascinating, but where they really shine is in the real world. Let's move past the abstract concepts and look at how these models are powering tools that solve actual, everyday problems at work. The goal is simple: let the AI handle the time-consuming language tasks so you can focus on strategy and high-level thinking.

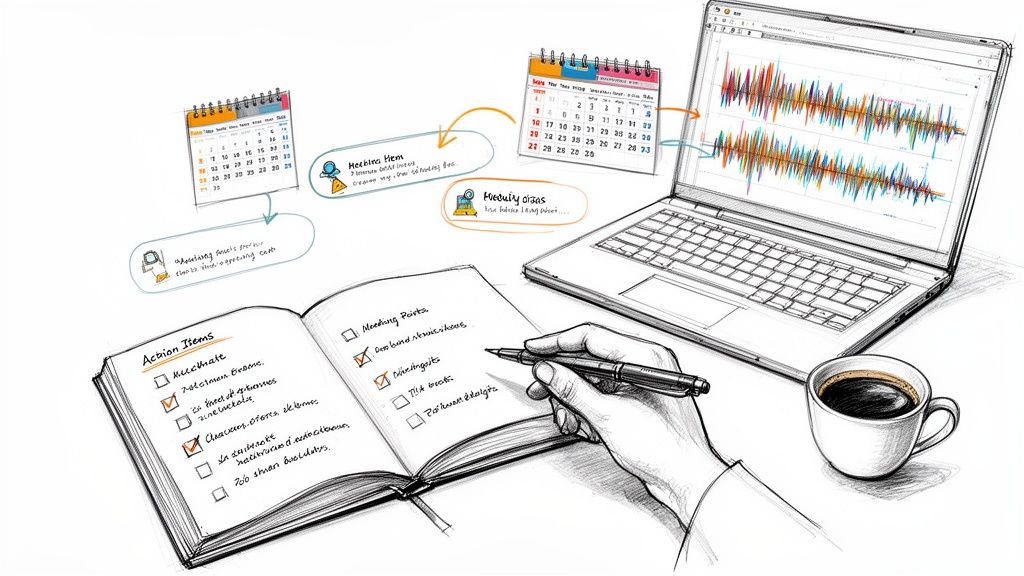

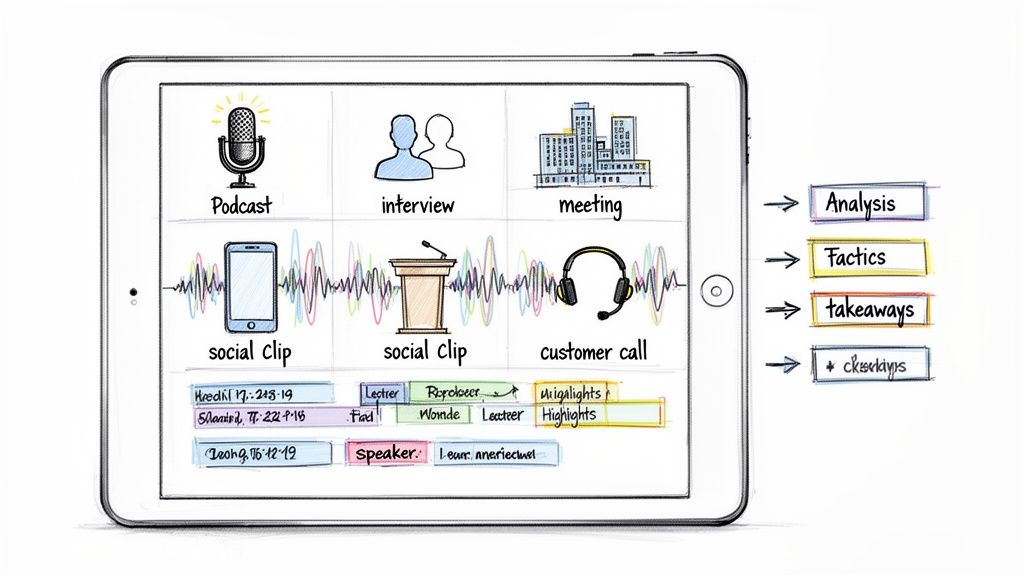

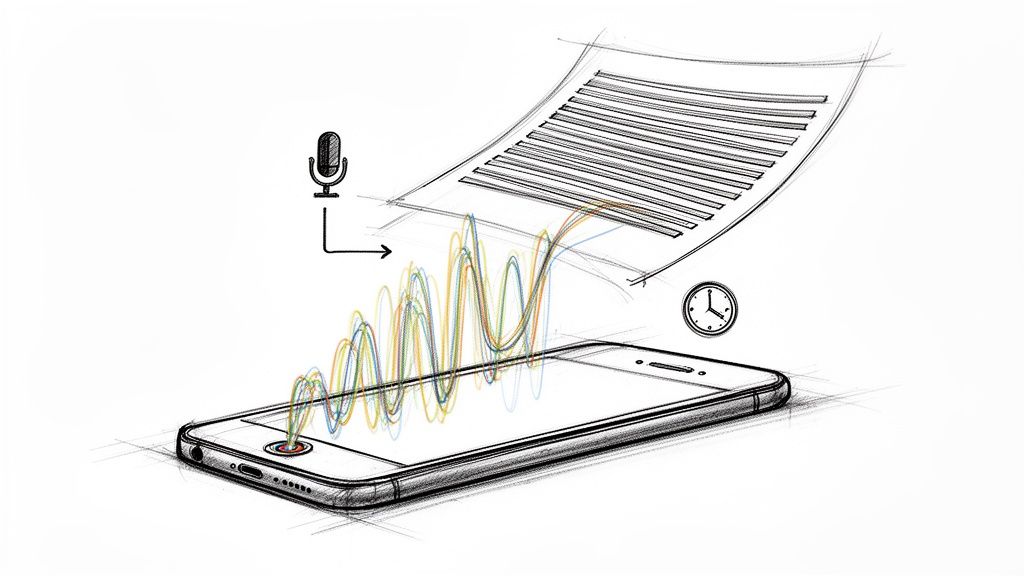

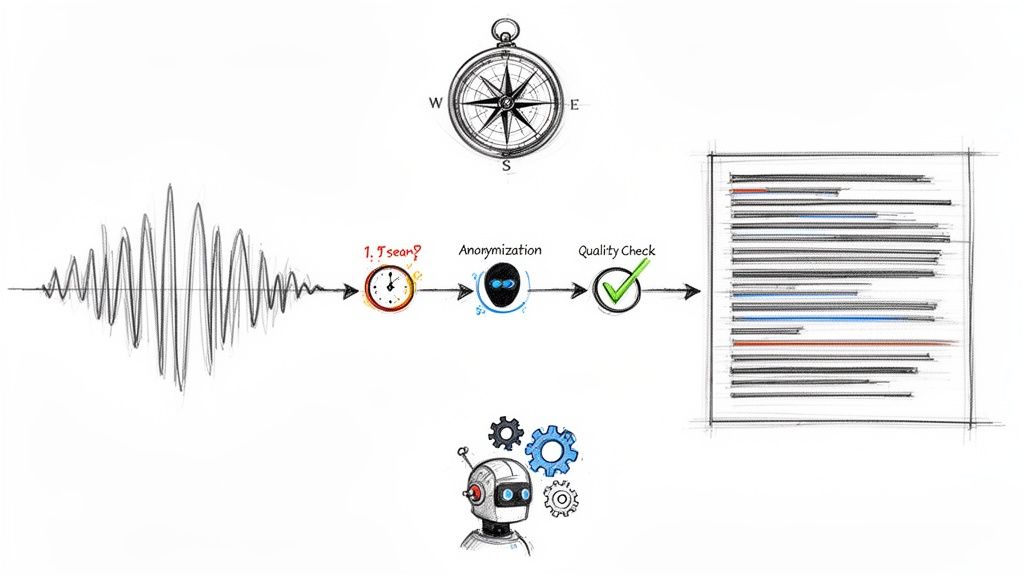

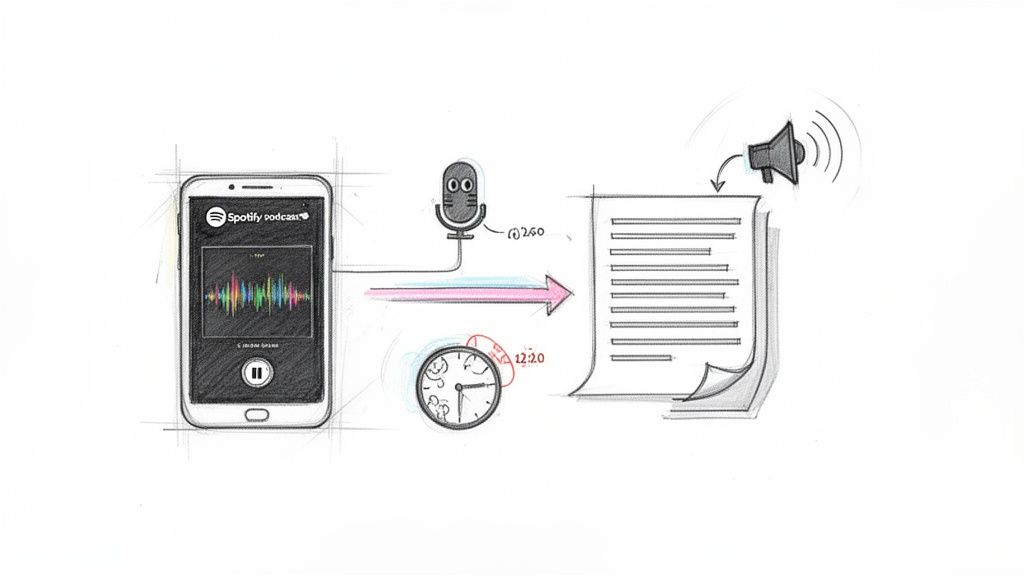

Think about transcribing audio. From my own experience, I know a one-hour interview can easily eat up several hours of tedious typing. An AI transcription service, built on a sophisticated language model, can turn that same audio into an accurate, searchable document in just a few minutes. That's a direct and massive boost in efficiency.

Automating Content Creation and Management

Beyond just converting speech to text, language models are fundamentally changing how we create and manage content. They can take a simple idea and spin it into all sorts of written material, helping you get past that dreaded blank page and speed up your entire workflow.

Here are a few ways I've personally put them to use:

- Drafting Content: Stuck on a social media post, a blog outline, or a tricky email? A language model can give you a solid first draft in seconds. All you have to do is refine and personalize it.

- Summarizing Information: Have a 50-page report or a dense article you need to understand quickly? These tools can boil it all down to a concise summary or a clean list of bullet points, saving you hours of reading.

- Repurposing Media: Turn a podcast episode into a blog post, pull key quotes from a video for social media, or generate show notes from a webinar. It's all about getting more mileage out of the content you already have.

The real game-changer is the ability to grasp intent. Modern search engines don't just match keywords anymore. They use language models to figure out what you're really looking for, which is why the results are so much more relevant and helpful these days.

Unlocking New Creative and Analytical Possibilities

The applications don't stop with text. Language models are also being blended with other creative and analytical tools, opening up new ways to communicate and find insights that were once incredibly difficult to uncover.

For example, an AI meme generator is a fun but telling illustration of this. The underlying model interprets both the image and the text you provide, then creates a meme that's relevant and, ideally, funny. It's a creative task, powered by AI.

This same idea applies to serious analysis. A company can feed thousands of customer reviews into a language model to instantly spot common themes, track sentiment, and identify customer pain points. Imagine how long that would take a human team. Our guide on using speech-to-text AI dives deeper into how this tech is used to gain these kinds of customer insights.

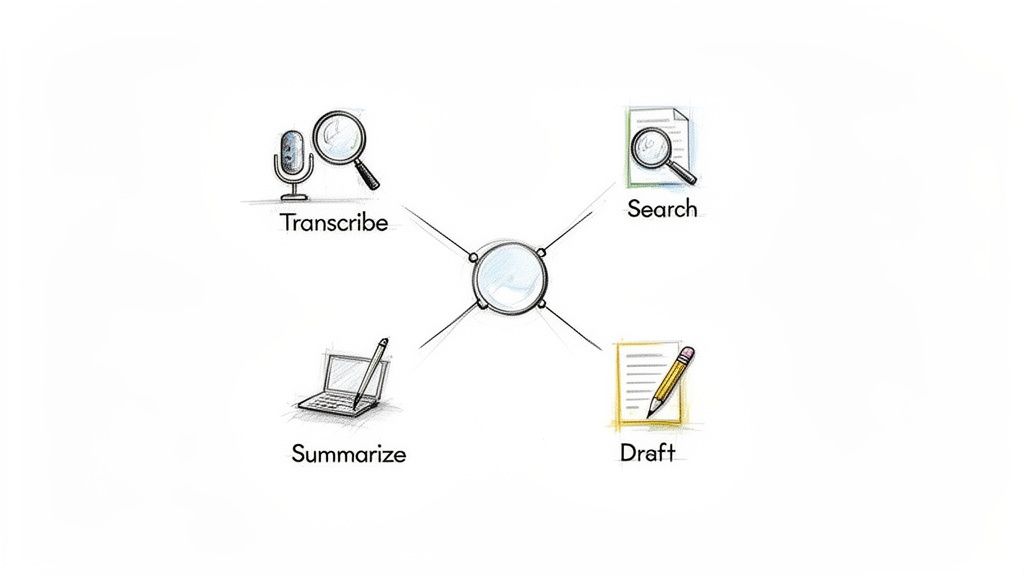

Once you understand what language models are, you start seeing opportunities to use them everywhere. They're powerful assistants for transcribing, summarizing, drafting, and analyzing, turning slow, manual tasks into fast, automated processes.

Understanding the Limitations and Future of AI Language

Language models are genuinely impressive, but they aren't magic. To get the most out of them, and to use them responsibly, we have to be honest about where they fall short. Thinking of them as powerful but flawed tools helps us become smarter, more critical users.

One of the most talked-about problems is what's known as AI "hallucinations." This is when a model confidently spits out information that sounds completely reasonable but is actually wrong or just made up. Since the AI's goal is just to predict the next logical-sounding word, it can easily create convincing sentences that are completely detached from reality. There’s no internal fact-checker; it will state fiction with the same authority as fact.

Another massive hurdle is inherent bias. These models are trained on a staggering amount of text from the internet, and the internet, as we know, is full of human biases, stereotypes, and bad information. The AI learns from all of it, and its outputs can reflect and even amplify those harmful ideas, leading to skewed or unfair results. Researchers are actively working on ways to filter out this bias and build more neutral models, but it's a tough, ongoing challenge.

The core issue is that a model doesn't "know" what is true or false; it only knows what is statistically probable based on its training data. This distinction is key to understanding both its power and its pitfalls.
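A toy example makes the point concrete. The probability table below is invented for illustration; a real model would compute such a distribution from its weights. Both decoding rules shown, picking the single most likely token (greedy decoding) and sampling in proportion to probability, choose among statistically plausible continuations without ever consulting a source of truth. Here the most likely answer is wrong: Australia's capital is Canberra.

```python
import random

# Hypothetical next-token probabilities a model might assign after the prompt
# "The capital of Australia is". The numbers are invented for illustration.
next_token_probs = {"Sydney": 0.48, "Canberra": 0.45, "Melbourne": 0.07}

def greedy_next(probs):
    """Return the single most probable token. No fact-checking happens here."""
    return max(probs, key=probs.get)

def sample_next(probs, rng):
    """Draw a token at random, in proportion to its probability."""
    tokens = list(probs)
    return rng.choices(tokens, weights=[probs[t] for t in tokens], k=1)[0]

print(greedy_next(next_token_probs))  # Sydney
rng = random.Random(0)
print([sample_next(next_token_probs, rng) for _ in range(5)])
```

Sampling sometimes produces the right answer and sometimes the wrong one, and either way the output reads with the same fluency and confidence.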

Navigating the Ethical and Security Landscape

Beyond just getting things right, powerful language models bring up some serious ethical and security questions. These aren't just technical glitches to be patched; they're societal issues that demand careful consideration from all of us.

- Misinformation: The ability to generate realistic-looking text on a massive scale is a dream for anyone looking to spread fake news or propaganda.

- Authenticity: When AI can write just as well as a human, how do we know who—or what—created something? It complicates our ideas about authorship and originality.

- Security: Bad actors are already exploring how to use these models for sophisticated phishing scams, social engineering, and other malicious activities.

But it’s not all doom and gloom. These challenges are at the forefront of AI research. Developers are constantly experimenting with new ways to make models more truthful, reduce their biases, and build in better safety features. The goal isn't just to make AI more powerful, but to create systems that are safer, more transparent, and better aligned with human values.

For now, the best thing you can do as a user is to stay aware of these limitations. That’s the first step to using this incredible technology wisely.

Got Questions? We’ve Got Answers.

As we wrap up, it's natural to still have some questions floating around. Let's tackle a few of the most common ones to help bring everything into focus.

What’s the Real Difference Between AI, Machine Learning, and Language Models?

It helps to think of them like a set of nesting dolls, one inside the other.

- Artificial Intelligence (AI) is the biggest, outermost doll. This is the whole, sprawling field dedicated to making machines smart enough to perform tasks that typically require human intelligence.

- Machine Learning (ML) is the next doll inside. ML is a specific branch of AI where, instead of being programmed for every single step, a machine learns and improves on its own by analyzing huge amounts of data.

- Language Models are the smallest, innermost doll. They are a specialized type of machine learning model that focuses exclusively on one thing: understanding, processing, and generating human language.

Can a Language Model Actually Understand Emotions or Context?

This is a great question. Language models don't "feel" emotions like we do. They don't have consciousness or personal experiences.

What they are incredibly good at, however, is recognizing patterns. They've been trained on billions of examples of human writing, so they've learned to associate certain words, phrases, and sentence structures with specific emotions like joy, frustration, or sarcasm. It's a highly sophisticated form of pattern matching, not genuine emotional understanding.

How Can I Start Using Language Models in My Work Today?

Chances are, you already are. If you’ve used Google Search, gotten a suggestion from Grammarly, or seen your email client finish your sentence, you've interacted with a language model.

To be more intentional about it, start by looking at your own daily tasks. Is there a repetitive, language-based chore you'd love to offload? You could explore AI-powered writing assistants, automated transcription services like Whisper AI, or even chatbot platforms. The key is to find a specific problem and look for a tool designed to solve it.

Ready to put a powerful language model to work for you? With Whisper AI, you can instantly transcribe and summarize your audio and video content, turning hours of media into accurate, actionable text in minutes. Discover how Whisper AI can transform your workflow today.